Stanford AI Index 2026 finds that responsible AI is not keeping pace with AI capability

++ Stalking victim sues OpenAI over ChatGPT’s alleged role; Maine advances temporary ban on new large AI data centers; China-backed groups call for open global AI governance & more...

Today’s highlights:

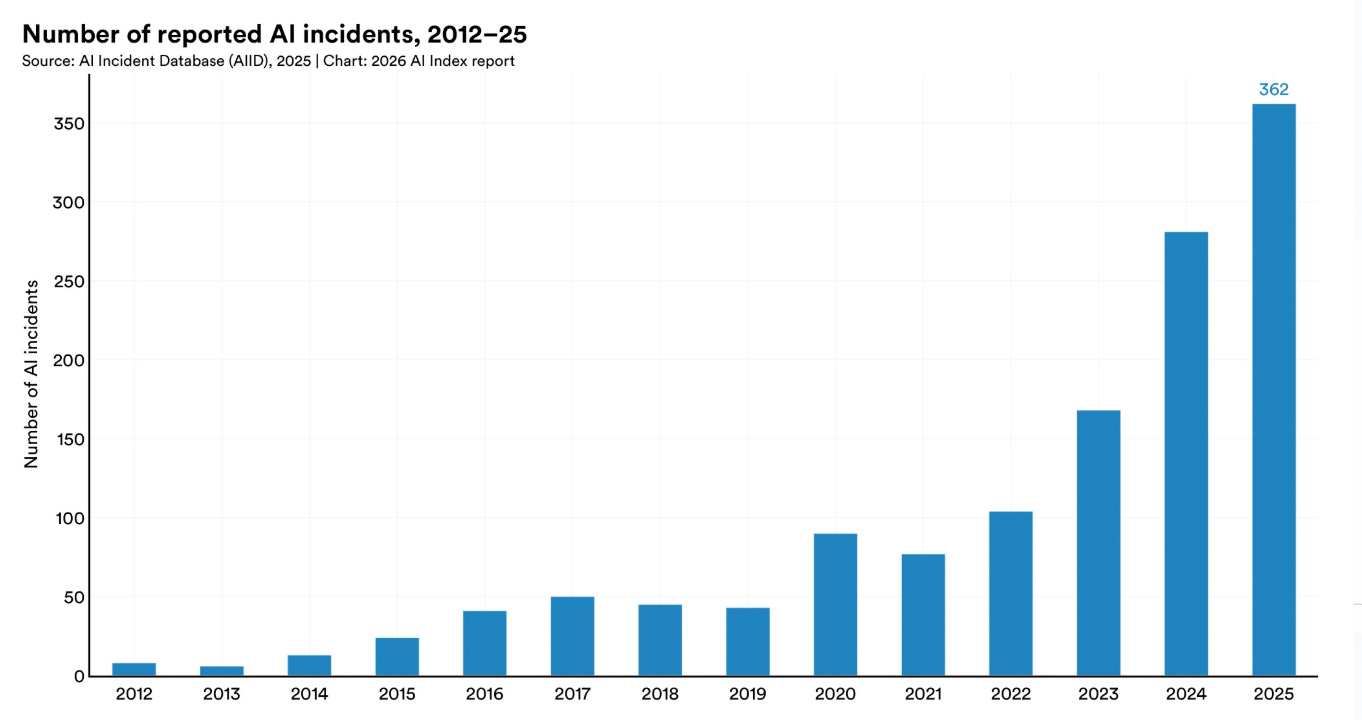

Stanford HAI’s 2026 AI Index Report says AI progress is still accelerating, with industry producing more than 90% of notable frontier models in 2025 and adoption spreading quickly across companies, students, and consumers. The report says the performance gap between U.S. and Chinese AI models has narrowed sharply, even as the U.S. remains ahead in private investment and top-tier model output, while China leads in publications, patents, and industrial robot installations. It also highlights growing risks: responsible AI efforts are lagging behind capability gains, documented AI incidents rose to 362 from 233 in 2024 (an increase of roughly 55%), and trust in governments to regulate the technology remains uneven. At the same time, AI infrastructure and policy are becoming more strategic, with the U.S. dominating data center capacity, Taiwan’s TSMC central to advanced chip supply, and more countries pursuing AI sovereignty through national strategies and supercomputing investments.

Top Takeaways

AI capability is not plateauing. It is accelerating and reaching more people than ever.

The U.S.-China AI model performance gap has effectively closed.

The United States hosts the most AI data centers, with the majority of their chips fabricated by one Taiwanese foundry.

AI models can win a gold medal at the International Mathematical Olympiad but cannot reliably tell time—an example of what researchers call the jagged frontier of AI.

Responsible AI is not keeping pace with AI capability, with safety benchmarks lagging and incidents rising sharply.

The United States leads in AI investment, but its ability to attract global talent is declining.

AI adoption is spreading at historic speed, and consumers are deriving substantial value from tools they often access for free.

Formal education is lagging behind AI, but people are learning AI skills at every stage of life.

AI sovereignty is becoming a defining feature of national policy, but capabilities remain uneven, even as open-source development helps to redistribute who participates.

AI experts and the public have very different perspectives on the technology’s future, and global trust in institutions to manage AI is fragmented.

At the School of Responsible AI (SoRAI), we help both individuals and organizations build practical, real-world AI literacy and Responsible AI capability through structured, engaging, and action-oriented programs. For individuals, this includes AI Literacy, globally relevant certification training such as AIGP, RAI, and AAIA, as well as career transition and advisory support for professionals moving into AI governance roles. For organizations, we offer customized enterprise AI literacy training, Responsible AI strategy and governance setup, and AI assurance support to help teams understand, operationalize, and validate AI responsibly. At the core of SoRAI is a progressive three-layer approach: first helping people understand AI, then build the right governance foundations, and finally validate readiness through assurance and audit-focused thinking. Want to learn more? Explore our AI Literacy programs, certification trainings, and career support offerings, or write to us for customized enterprise solutions.

⚖️ AI Ethics

OpenAI Expands Trusted Access for Cyber as GPT-5.4-Cyber Rolls Out to Vetted Defenders

OpenAI has expanded its Trusted Access for Cyber program to reach thousands of verified individual defenders and hundreds of teams that protect critical software, while also releasing GPT-5.4-Cyber, a version of GPT-5.4 tuned for defensive cybersecurity work. The company said the model is more permissive for legitimate security tasks such as vulnerability research and binary reverse engineering, but it will initially be available only to vetted security vendors, organizations, and researchers. OpenAI said TAC, first launched in February, now includes more access tiers tied to stronger identity verification, with the highest tier unlocking GPT-5.4-Cyber. Reuters noted the move came about a week after Anthropic disclosed its own controlled cybersecurity model effort, Mythos, under Project Glasswing.

Stalking Victim Sues OpenAI, Alleging ChatGPT Fueled Abuser’s Delusions and Ignored Repeated Warnings

A woman identified as Jane Doe has sued OpenAI in California, alleging ChatGPT amplified her former partner’s delusions and helped fuel months of stalking and harassment against her. The lawsuit says OpenAI received multiple warnings, including an internal safety flag tied to possible mass-casualty weapons activity, but restored the user’s account and failed to act on her abuse report. Doe is seeking punitive damages and a court order requiring OpenAI to block the user, preserve his chat logs, and alert her if he tries to return to the platform. The case adds to wider scrutiny of whether AI chatbots can reinforce dangerous mental-health crises and expose companies to growing legal liability.

Maine Lawmakers Pass Bill for Temporary Ban on New Large AI Data Centers

Maine lawmakers have passed a bill that would block, until October 2027, construction of new data centers that draw more than 20 megawatts of power. The bill now awaits approval from Gov. Janet Mills. The proposed moratorium is aimed at giving the state time to study the impact of large data centers on the power grid, utilities, land use and the environment, amid growing concern over their electricity and water demands. The move comes as major tech companies expand AI-related data center projects across the US, with communities also raising complaints about noise and light pollution. If the bill is signed into law, Maine could become the first state to impose such a pause, potentially influencing how other states respond to the rapid growth of AI infrastructure.

Chinese Scientific Groups Issue Initiative Calling for Open and Fair Global AI Governance System

Multiple Chinese scientific groups have issued a joint initiative calling for an open, fair, inclusive and effective global AI governance system, according to Xinhua. The document, backed by 16 societies affiliated with the China Association for Science and Technology, says AI development should improve human well-being while keeping security as a basic requirement for research and regulation. It also calls for equal participation by all countries in AI research and governance, while opposing technological hegemony, academic barriers and unreasonable monopolies. The initiative further urges stronger international cooperation, more public education on AI risks and benefits, and practical steps to advance the idea of “AI for good.”

LinkedIn Data Shows Hiring Down 20% Since 2022, but AI Not Yet a Factor

LinkedIn says its data shows hiring has fallen about 20% since 2022, but the company does not see clear evidence that AI is driving the slowdown so far. A top LinkedIn executive said the platform’s labor-market data, drawn from more than a billion members, points instead to higher interest rates as a more likely reason for weaker hiring. The company also said it has not seen bigger declines in AI-exposed fields such as customer support, administration, and marketing, or among young adults entering the workforce. Still, LinkedIn warned that AI could reshape work over time, estimating that the skills needed for the average job may change by 70% by 2030.

🚀 AI Breakthroughs

Anthropic Releases Claude Opus 4.7 as Generally Available Model Amid Mythos Preview Buzz

Anthropic has released Claude Opus 4.7, its most powerful generally available model so far, with improvements in advanced software engineering, image analysis, instruction-following, and creative document generation. The release follows the recent debut of Mythos Preview, a cybersecurity-focused model that Anthropic says outperforms Opus 4.7 on all key evaluations but remains limited to select partners including Nvidia, JPMorgan Chase, Google, Apple, and Microsoft. Anthropic said Opus 4.7 includes added cybersecurity safeguards and was used to test protections before any broader release of Mythos-class models. The company also launched a Cyber Verification Program for security professionals seeking broader cybersecurity use, while keeping Opus 4.7 pricing unchanged at $5 per million input tokens and $25 per million output tokens.
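
For readers budgeting against the quoted prices, here is a minimal arithmetic sketch. The two rates come from the item above; the function name and the example token counts are hypothetical, and real bills may differ if discounts, caching, or other pricing terms apply.

```python
# Prices quoted in the item above, in USD per million tokens.
INPUT_USD_PER_MILLION = 5.0
OUTPUT_USD_PER_MILLION = 25.0


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of a workload at the quoted per-token prices."""
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MILLION
    return input_cost + output_cost


# Hypothetical workload: 200,000 input tokens and 50,000 output tokens.
example_cost = estimate_cost_usd(200_000, 50_000)
print(f"${example_cost:.2f}")  # $2.25
```

Because output tokens cost five times as much as input tokens at these rates, the 50,000 output tokens in the example account for more of the total ($1.25) than the 200,000 input tokens ($1.00).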

Anthropic Launches Claude Design for Rapid Visual Creation, Prototyping, and Team Design System Workflows

Anthropic has launched Claude Design, an experimental tool that uses Claude to help users quickly create visuals such as prototypes, slide decks, and one-pagers by describing what they want in plain language. Aimed at founders, product managers, and others without formal design skills, the product also lets users refine outputs through edits or follow-up requests and can apply a company’s design system by reading its codebase and design files. Anthropic said the tool is meant to complement platforms like Canva rather than replace them, with exports available as PDFs, URLs, PPTX files, or editable Canva projects. Powered by Claude Opus 4.7, Claude Design is available in research preview for Claude Pro, Max, Team, and Enterprise subscribers, underscoring Anthropic’s broader push into enterprise and workplace AI tools.

Science Corp. Prepares First Human Brain Sensor Trial for Biohybrid Brain-Computer Interface in U.S.

Science Corp., the brain-computer interface startup founded by former Neuralink president Max Hodak, is preparing for its first U.S. human trial of a brain sensor and has added Yale neurosurgery chair Dr. Murat Günel as a scientific adviser. The company’s longer-term goal is a biohybrid interface that combines electronics with lab-grown neurons, but the first trial would test a sensor without neurons by placing it on the brain’s surface during major surgeries. Science says the approach may reduce tissue damage compared with implants inserted into brain tissue, and it is in discussions with ethics boards as it develops prototypes and clinical plans. The company recently raised $230 million at a $1.5 billion valuation, while its earliest human trial for the sensor is considered unlikely before 2027.

Parasail Is Betting Tokenmaxxing Will Create the Next Compute Giant

Parasail, a startup focused on AI inference infrastructure, has raised a $32 million Series A as it bets rising demand for cheap, fast token generation will fuel the next major compute business. The company says it processes 500 billion tokens a day by routing workloads across 40 data centers in 15 countries and tapping external compute markets, rather than relying mainly on its own chips. Its strategy is built around the idea that startups increasingly want lower-cost alternatives to frontier model APIs, especially as open-source models and AI agents drive up the number of inference requests. Investors backing the round argue that inference will become a major share of future software costs, positioning Parasail to compete with larger cloud providers and inference-focused rivals such as Fireworks AI and Baseten.

OpenAI Updates Agents SDK With Sandboxing and Harness Tools for Safer Enterprise AI Agents

OpenAI has updated its Agents SDK with new sandboxing and harness features aimed at helping enterprises build safer and more capable AI agents using its models. The sandbox lets agents operate in controlled environments with limited access to files, code, and tools, reducing the risks of unsupervised behavior. OpenAI said the new in-distribution harness is designed to support more advanced, long-horizon tasks by allowing agents to work within approved workspace resources and infrastructure. The features are available to all API customers at standard pricing, launching first in Python, with TypeScript support planned later.

Canva Expands AI Assistant With Tool-Using Design Automation, Integrations, Scheduling, and Editable Layered Outputs

Canva has updated its AI assistant so users can describe a design task in plain language and have the system automatically choose the right tools to generate editable design options with layered elements. The company is also adding integrations with Slack, Gmail, Google Drive, Calendar, and Zoom, along with a web research feature, so the assistant can pull in context from files, messages, meetings, and online sources. New scheduling tools let users set repeatable tasks that run in the background, while other upgrades include HTML import for AI code generation and text-prompted spreadsheet creation. Canva said the update is part of a broader push to make its AI assistant central to creative workflows, as rivals such as Adobe and Figma also expand agentic AI features. Canva AI 2.0 is rolling out in research preview this week, with wider availability planned in the coming weeks.

Google Blocks 8.3 Billion Ads in 2025 as Gemini AI Shifts Enforcement Strategy

Google said it blocked a record 8.3 billion ads globally in 2025, up from 5.1 billion a year earlier, while suspending fewer advertiser accounts, signaling a shift toward stopping bad ads rather than broadly banning bad actors. The company said its AI systems, including Gemini models, caught more than 99% of policy-violating ads before users saw them and helped detect scam campaigns created at scale with generative AI. Google’s 2025 Ads Safety Report said 602 million blocked ads and 4 million suspended accounts were tied to scams. In the U.S., Google removed more than 1.7 billion ads and suspended 3.3 million accounts, while in India it blocked 483.7 million ads but account suspensions fell to 1.7 million from 2.9 million.

Google Adds AI Skills to Chrome for Saving and Reusing Favorite Gemini Workflows Across Web Pages

Google is adding a new Chrome feature called Skills that lets users save and reuse their favorite Gemini AI prompts across different web pages, reducing the need to type the same instructions repeatedly. The tool builds on Gemini’s existing ability in Chrome to summarize pages, answer questions, and perform tasks, and saved prompts can be accessed through chat history, a slash command, or the plus button. Google said early testing showed people used Skills for tasks such as recipe adjustments, shopping comparisons, health tracking, and document summaries, while a built-in Skills library will offer ready-made workflows that can also be edited. The feature is rolling out to signed-in Chrome desktop users starting Tuesday and will initially be available only when the browser language is set to English (US).

Google Adds Side-by-Side Web Browsing and Multi-Tab Context Search to AI Mode in Chrome

Google has started rolling out new AI Mode features in Chrome desktop that let users open web pages side-by-side with its conversational search tool, making it easier to compare information and ask follow-up questions without losing search context. The feature can use details from the opened page along with information from across the web to answer more specific queries. The company has also added a way to include recently opened Chrome tabs, images, and files in AI Mode searches on desktop and mobile. These updates are now available in the U.S., with a wider regional expansion planned later.

Luma Launches AI Production Studio With Wonder Project for Faith-Focused and Family Film Projects

Luma has launched Innovative Dreams, a new AI-powered production company in partnership with Wonder Project, the faith and family entertainment studio behind titles on Amazon Prime Video. Their first project, “The Old Stories: Moses,” starring Ben Kingsley, is set to debut this spring on Prime Video. The venture will use Luma’s AI tools and “real-time hybrid filmmaking” to let creative teams adjust sets, props, lighting, and actor footage during production rather than in post-production. While the partnership builds on Wonder Project’s faith-focused roots, the companies said Innovative Dreams will also work on projects across other genres and with other studios.

🎓 AI Academia

Study Examines Explainability Gaps and Governance Risks as Enterprises Scale Agentic AI Systems

A new paper examines the growing challenge of “agent sprawl” as companies adopt agentic AI at scale, especially through low-code tools that let employees build autonomous systems faster than governance can keep up. It argues that traditional explainability methods are no longer enough for multi-agent environments, where the bigger need is auditability, or tracking how agents make decisions, communicate, and access tools and data. The paper says enterprise AI teams are increasingly worried about weak monitoring, duplicated agents, hidden dependencies, and the risk of lower-clearance agents reaching sensitive information through complex chains of interaction. To address this, it outlines design-time and runtime explainability methods and proposes an early “Agentic AI Card” prototype aimed at improving oversight, accountability, and trust in large-scale enterprise deployments.
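
The risk of a lower-clearance agent reaching sensitive data through a chain of other agents can be framed as a graph reachability check. The sketch below is illustrative only and is not the paper's method: the agent names, the `calls` and `access` maps, and the numeric clearance levels are all hypothetical.

```python
from collections import deque


def reachable_resources(start, calls, access):
    """Return every resource `start` can reach directly or through other agents."""
    seen = {start}
    queue = deque([start])
    found = set()
    while queue:
        agent = queue.popleft()
        found |= access.get(agent, set())
        for callee in calls.get(agent, ()):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return found


def clearance_violations(calls, access, agent_clearance, resource_clearance):
    """List (agent, resource) pairs where a reachable resource exceeds the agent's clearance."""
    violations = []
    for agent in sorted(agent_clearance):
        level = agent_clearance[agent]
        for resource in sorted(reachable_resources(agent, calls, access)):
            if resource_clearance[resource] > level:
                violations.append((agent, resource))
    return violations


# Hypothetical deployment: which agent may call which, and who reads what.
calls = {"helpdesk_bot": ["report_bot"], "report_bot": ["finance_bot"]}
access = {"helpdesk_bot": {"faq"}, "finance_bot": {"payroll"}}
agent_clearance = {"helpdesk_bot": 1, "report_bot": 2, "finance_bot": 3}
resource_clearance = {"faq": 1, "payroll": 3}

print(clearance_violations(calls, access, agent_clearance, resource_clearance))
# [('helpdesk_bot', 'payroll'), ('report_bot', 'payroll')]
```

Neither flagged agent has direct access to the payroll data; the exposure only shows up once the call chain is followed, which is why an audit of this kind needs the interaction graph and not just per-agent permissions.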

Study Proposes Machine-Readable AI Compliance Evidence Framework Using OSCAL for EU High-Risk Systems

A new preprint argues that AI compliance still lacks the technical plumbing needed to turn policy rules into audit-ready, machine-readable evidence. The paper says frameworks such as the EU AI Act, ISO/IEC 42001, and the NIST AI Risk Management Framework describe what organizations must prove, but not how to generate that proof in a standardized, executable format. To address that gap, it adapts NIST’s OSCAL cybersecurity compliance standard for AI, adds 16 extensions for lifecycle and risk tracking, and proposes a three-layer “compliance-as-code” setup that captures evidence during model training. The approach was tested on two high-risk AI use cases covered by the EU AI Act—a credit scoring system and a medical imaging segmentation model—and the reference implementation has been released as open-source software under the Apache 2.0 license.

AISafetyBenchExplorer Study Finds AI Safety Benchmarks Fragmented, Metrics Inconsistent, and Governance Weak Across Ecosystem

A new paper presents AISafetyBenchExplorer, a catalogue of 195 AI safety benchmarks released between 2018 and 2026, and argues that the biggest problem in LLM safety testing is not too few benchmarks but poor consistency in how they are measured and maintained. The study finds the field is heavily skewed toward English-only benchmarks (165 of 195), evaluation-only resources (170 of 195), and aging repositories, with 137 GitHub repos and 96 Hugging Face datasets marked as stale. It also says common metric labels such as accuracy, F1, safety score, and overall benchmark score often hide major differences in judging methods, aggregation rules, and threat models, making comparisons unreliable. The paper describes the benchmark ecosystem as fragmented and weakly governed, with benchmark growth outpacing standardization, post-publication upkeep, and clear rules for selecting the right benchmarks for safety claims.

Oxford Study Says Deepfakes Wrongfully Undermine Personal Authority Over Image Use and Identity Governance

A forthcoming paper in AI & Society argues that deepfakes are not only harmful because of the damage they can cause, but also because they can violate a person’s rightful control over how their image and identity are used. The paper says deepfakes become wrongful when they exploit someone’s biometric features to simulate actions or expressions without consent, effectively taking over the source of that person’s apparent agency. It describes this as an “algorithmic conscription” of identity, where a person’s likeness is used as raw material for synthetic content. The article also draws a line between acceptable uses, such as artistic depiction, and wrongful AI-generated simulation that falsely presents a person’s image as if it came from them.

Survey of 457 Researchers Finds Generative AI Reshaping Software Engineering Research Practices and Governance

A new survey of 457 software engineering researchers found that generative AI is now widely used across the field, especially for writing, brainstorming, and other early-stage research tasks, while core methodological and analytical work is still mostly handled by humans. The study says many researchers feel growing pressure to use GenAI and align their work with the trend, even as questions remain about trust, accuracy, bias, and unclear rules. Respondents broadly reported productivity benefits, but also warned that AI-generated errors and weak transparency could affect research quality. The paper concludes that stronger human oversight, verification practices, and clearer guidance for responsible use and peer review are needed as GenAI becomes more embedded in academic research.

Preprint Details PRISM Framework for Hierarchy-Based AI Behavioral Risk Signals and Red Line Detection

A new April 2026 preprint describes the PRISM Risk Signal Framework, a method for spotting dangerous AI behavior by looking at how models rank values, weigh evidence, and trust sources, rather than only checking specific harmful prompts or outputs. The paper defines 27 behavioral risk signals and says they can be classified as confirmed risks or watch signals using a dual-threshold scoring method. It reports results from about 397,000 forced-choice responses across seven AI models, showing the framework can separate models with extreme, context-dependent, or more balanced reasoning profiles. The study argues that this hierarchy-based approach could help regulators and safety teams detect risks earlier and add a behavioral layer to existing rules such as the EU AI Act.
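
As a rough illustration of what a dual-threshold classification could look like, the sketch below sorts signals into three buckets. It is an assumption-laden toy, not the preprint's scoring method: the 0-to-1 score scale, the threshold values of 0.5 and 0.8, and the signal names are all hypothetical.

```python
def classify_signal(score: float,
                    watch_threshold: float = 0.5,
                    confirmed_threshold: float = 0.8) -> str:
    """Classify one behavioral signal score using two cut-offs (hypothetical values)."""
    if not 0.0 <= watch_threshold <= confirmed_threshold <= 1.0:
        raise ValueError("thresholds must satisfy 0 <= watch <= confirmed <= 1")
    if score >= confirmed_threshold:
        return "confirmed risk"
    if score >= watch_threshold:
        return "watch signal"
    return "no signal"


# Hypothetical signal scores for a single model.
scores = {
    "source_trust_skew": 0.91,
    "evidence_discounting": 0.62,
    "value_rank_instability": 0.20,
}

for name, score in scores.items():
    print(f"{name}: {classify_signal(score)}")
```

Using two cut-offs instead of one lets borderline behavior be tracked as a watch signal without being reported as a confirmed risk.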

Preprint Proposes AI Integrity Framework for Verifiable Governance Through Auditable Reasoning and Authority Stack

An April 2026 preprint proposes “AI Integrity” as a new AI governance model focused on checking how an AI system reaches a decision, not just whether the final result looks safe, ethical, or aligned. The paper says current approaches such as AI ethics, safety, and alignment mostly judge outcomes, while AI Integrity examines an AI system’s “Authority Stack” — the values, evidence standards, source choices, and data filters shaping its reasoning. It outlines a four-layer framework and warns that manipulation or “pollution” at any layer can distort outputs, with “Integrity Hallucination” described as a key measurable risk to value consistency. The study also presents a measurement framework called PRISM, aimed at making AI reasoning paths more transparent, auditable, and empirically testable in high-stakes areas like healthcare, law, defense, and education.

Study Proposes AI Identification Framework for Sustainable Enterprise Governance, Traceability, and Regulatory Accountability

A new academic paper argues that as AI systems become more powerful and embedded in critical infrastructure, they need verifiable identities to support regulation, audits, and long-term digital governance. The proposed framework combines model fingerprinting, cryptographic hashes, blockchain-based registration, zero-knowledge proofs, and post-deployment monitoring to track an AI system across its lifecycle without exposing sensitive internals. It also suggests a dual ID system with one machine-readable identifier and one human-readable code, stored in a tamper-resistant registry. The paper says this could help enterprises and regulators improve accountability, traceability, and policy enforcement as AI adoption expands.
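
One way to picture the dual ID idea is to derive both identifiers from a single cryptographic hash of the model. The sketch below is a hypothetical illustration and not the paper's scheme: the metadata fields, the `sha256:` prefix, and the 12-character grouped code are assumptions, and the blockchain registration, zero-knowledge proof, and monitoring parts of the proposal are not shown.

```python
import hashlib
import json


def make_ai_ids(metadata: dict, weights: bytes) -> tuple[str, str]:
    """Derive a machine-readable ID and a short human-readable code from one hash."""
    # Serialize metadata deterministically so the same model always hashes the same way.
    canonical = json.dumps(metadata, sort_keys=True, separators=(",", ":")).encode("utf-8")
    digest = hashlib.sha256(canonical + b"\x00" + weights).hexdigest()
    machine_id = f"sha256:{digest}"
    # Human-readable code: the first 12 hex characters, upper-cased, in groups of four.
    short = digest[:12].upper()
    human_code = "-".join(short[i:i + 4] for i in range(0, 12, 4))
    return machine_id, human_code


# Hypothetical model record; a real fingerprint would cover the actual weight files.
metadata = {"name": "example-model", "version": "1.0"}
weights = b"placeholder bytes standing in for model weights"

machine_id, human_code = make_ai_ids(metadata, weights)
print(machine_id)
print(human_code)
```

Any change to the weights or metadata produces a different hash, so both identifiers change with it. That is the property a tamper-resistant registry would rely on, although a 12-character code alone is too short to rule out collisions at scale.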

Study Maps GPT-3 to GPT-5 Capabilities, Limitations, Deployment Shifts, and Real-World Consequences

A new comparative study traces the GPT family from GPT-3 through GPT-5, arguing that these systems have evolved far beyond simple text generators into multimodal, tool-using, long-context AI systems embedded in broader workflows and products. The paper says direct model-to-model comparisons have become harder because performance now depends not just on the model itself, but also on routing, safety tuning, interface design, and access to external tools. It finds that major weaknesses have persisted across generations, including hallucinations, prompt sensitivity, fragile benchmark results, uneven performance across domains and user groups, and limited public transparency about training and architecture. At the same time, the study says newer GPT models are having wider real-world effects on software development, education, information work, and interface design, raising broader governance and deployment questions.

About SoRAI: SoRAI is committed to advancing AI literacy through practical, accessible, and high-quality education. Our programs emphasize responsible AI use, equipping learners with the skills to anticipate and mitigate risks effectively. Our flagship AIGP certification courses, built on real-world experience, drive AI governance education with innovative, human-centric approaches, laying the foundation for quantifying AI governance literacy. Subscribe to our free newsletter to stay ahead of the AI Governance curve.