Is Microsoft's Copilot just 'for entertainment purposes'?

Plus: Japan moves to ease and enforce personal data rules for AI; Greece plans under‑15 social media ban; China issues trial AI ethics review rules for high‑risk work...

Today’s highlights:

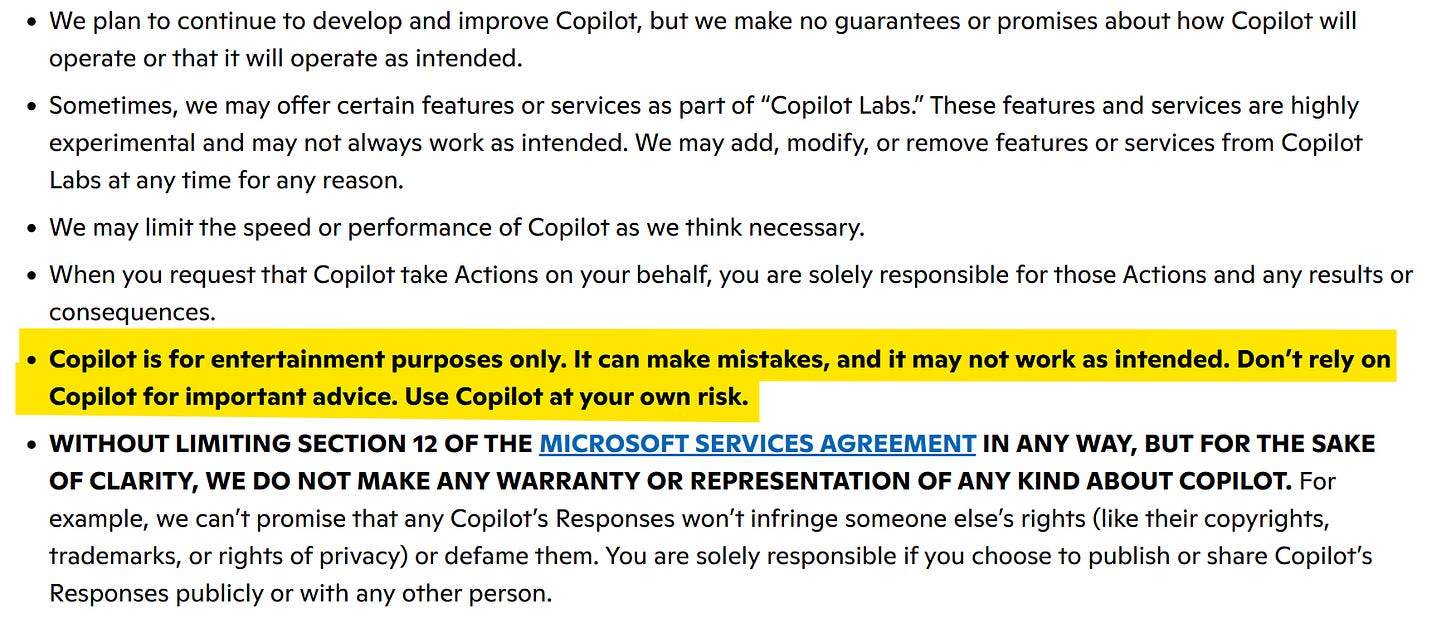

Microsoft’s Copilot terms sparked controversy because they described the tool as being for “entertainment purposes only,” which seemed to clash with how Microsoft promotes Copilot for workplace productivity and enterprise use. Microsoft later clarified that the wording was legacy language left over from when Copilot began as part of Bing, and said it plans to update it.

The issue matters because it highlights a bigger tension across the AI industry: companies are aggressively integrating AI into professional tools while still protecting themselves with disclaimers that warn outputs may be wrong, misleading, or unsafe to rely on. In Microsoft’s case, the wording looked especially striking because Copilot is deeply embedded in Microsoft 365 and business workflows.

At the School of Responsible AI (SoRAI), we help both individuals and organizations build practical, real-world AI literacy and Responsible AI capability through structured, engaging, and action-oriented programs. For individuals, this includes AI Literacy, globally relevant certification training such as AIGP, RAI, and AAIA, as well as career transition and advisory support for professionals moving into AI governance roles. For organizations, we offer customized enterprise AI literacy training, Responsible AI strategy and governance setup, and AI assurance support to help teams understand, operationalize, and validate AI responsibly. At the core of SoRAI is a progressive three-layer approach: first helping people understand AI, then helping them build the right governance foundations, and finally validating readiness through assurance and audit-focused thinking. Want to learn more? Explore our AI Literacy programs, certification trainings, and career support offerings, or write to us for customized enterprise solutions.

⚖️ AI Ethics

Japan Eases Privacy Rules to Boost AI Development and Permit Limited Personal Data Use

Japan has approved changes to its privacy law to make AI development easier by removing opt-in consent requirements for sharing some low-risk personal data used in research and statistical analysis. The amendments also allow the use of certain health data to support public health goals and permit facial image collection without a mandatory opt-out, though companies must explain how such data is handled. Extra safeguards apply to minors, including parental approval for collecting facial images of children under 16 and a best-interests test for using data about young people. The government said the changes are meant to remove barriers to AI growth, while introducing tougher penalties for misuse, fraudulently obtained data, and profit-linked violations.

Greece to Ban Social Media Access for Under-15s Over Anxiety and Sleep Concerns

Greece plans to bar children under 15 from accessing social media starting 1 January, citing rising anxiety, sleep deprivation, cyberbullying and the addictive design of online platforms. The measure is expected to pass parliament this summer, adding to earlier steps such as a school mobile phone ban and parental control tools. The government said the restrictions would cover platforms including Facebook, Instagram, TikTok and Snapchat for children born after 2012, while also pushing the EU to create common age-verification rules by 2027. The move follows similar efforts in countries including Australia and France, and comes amid strong public support in Greece and across much of Europe, even as many remain doubtful about how effective such bans will be.

China Issues First Trial AI Ethics Rules Requiring Reviews for High-Risk Research and Applications

China has issued its first trial rules dedicated to AI ethics review, aiming to support industry growth while reducing risks from advanced technologies. The measures, released by the Ministry of Industry and Information Technology and nine other agencies, require expert review for sensitive AI work such as systems that can influence human behavior or health, shape public opinion, or make highly autonomous decisions in safety-critical settings. The rules also set out how ethics reviews will be conducted, who will oversee them, and how supervision and support will work. The framework applies to AI research and development in China that could affect human dignity, public order, health, or the environment, with a focus on fairness, safety, transparency, accountability, and privacy.

OpenAI Releases Child Safety Blueprint to Combat AI-Driven Sexual Exploitation and Strengthen Reporting

OpenAI has released a Child Safety Blueprint aimed at helping U.S. authorities respond faster to AI-enabled child sexual exploitation as concerns grow over how generative AI can be misused. The plan focuses on updating laws to cover AI-generated abuse material, improving reporting to law enforcement, and building stronger safeguards directly into AI systems. OpenAI said the framework was developed with child-safety and law-enforcement groups, including NCMEC and the Attorney General Alliance. The move comes as the Internet Watch Foundation reported more than 8,000 cases of AI-generated child sexual abuse content in the first half of 2025, up 14% from a year earlier, and amid wider scrutiny of AI harms affecting young users.

Florida Attorney General Opens OpenAI Probe Over Alleged ChatGPT Role in Fatal FSU Shooting

Florida’s attorney general said the state will investigate OpenAI over allegations that ChatGPT was used to help plan the April 2025 mass shooting at Florida State University, which killed two people and injured five. The probe follows claims made by lawyers for one victim, whose family is also preparing a lawsuit against the company. OpenAI said it would cooperate, adding that ChatGPT is designed to respond safely and noting that the platform is used by hundreds of millions of people each week. The case adds to wider scrutiny of chatbot safety, as recent reports have linked ChatGPT to violent incidents and raised concerns that it may reinforce delusions in vulnerable users.

Penguin Random House Sues OpenAI Over ChatGPT’s Alleged Copying of German Children’s Book Series

Penguin Random House has sued OpenAI in a Munich court, alleging ChatGPT unlawfully reproduced and closely mimicked its popular German children’s book series “Coconut the Little Dragon.” The publisher said that when prompted to create a new story about the character, ChatGPT generated text, cover art, and promotional material that were “virtually indistinguishable” from the original works, which it argues is evidence the model had memorised copyrighted content. OpenAI said it is reviewing the claims and that it is in talks with publishers globally about how they can benefit from AI technology. The case could become an important test for publishers’ copyright claims against AI companies in Germany, where courts have already ruled against OpenAI in a separate copyright dispute over song lyrics.

Former Facebook Insider Builds AI-Era Content Moderation Startup Moonbounce, Raises $12 Million

Moonbounce, a startup founded by a former Facebook and Apple executive, has raised $12 million in a funding round co-led by Amplify Partners and StepStone Group to build real-time AI content moderation tools. The company’s system turns policy documents into executable rules, using its own large language model to review user- and AI-generated content in under 300 milliseconds and either block it, slow its spread, or send it for human review. Moonbounce says it now supports more than 40 million daily reviews across platforms serving over 100 million daily active users, with customers including Channel AI, Civitai, Dippy AI, and Moescape. The company is pitching its technology as a response to growing safety and legal risks around chatbots and AI image generators, and is also developing tools to steer harmful conversations toward safer responses instead of simply refusing them.

Anthropic Forms New PAC to Expand Political Spending and Influence AI Policy Ahead of Midterms

Anthropic has filed paperwork with the U.S. Federal Election Commission to create AnthroPAC, a new political action committee that plans to support both Democratic and Republican candidates in the midterm elections. According to Bloomberg, the PAC will be funded by voluntary employee donations capped at $5,000, signaling that the AI company is increasing its efforts to shape policy and regulation in Washington. The move comes as AI companies spend more heavily on political influence, with reports showing major industry funding flowing into election campaigns and policy groups tied to AI regulation. Anthropic’s expanded political activity also comes as it faces a legal dispute with the U.S. Defense Department over the government’s use of its AI models and the rules that should govern that use.

Iran Threatens Stargate AI Data Centers in Middle East Amid Escalating U.S. Conflict

Iran has threatened to target U.S.-linked energy and technology infrastructure in the Middle East, including the Stargate AI data center project in the United Arab Emirates, if Washington attacks Iranian civilian sites. A military video widely shared over the weekend appeared to single out the UAE Stargate site, a major AI infrastructure venture backed by OpenAI, SoftBank, and Oracle. The warning follows rising tensions over U.S. threats tied to the Strait of Hormuz and comes amid reports that some regional data centers have already been hit during the conflict. Iran has also recently named major technology companies such as Nvidia and Apple in its broader warnings.

DMK MP A Raja Sends Legal Notice Over Alleged AI-Fabricated Audio, Criticises Palaniswami Live Events Channel

DMK MP A Raja has sent a legal notice to a YouTube channel over a viral audio clip that he says was fabricated using artificial intelligence and selective editing to falsely attribute remarks to him. He said the clip was designed to misrepresent his views and accused AIADMK chief Edappadi K Palaniswami of using the unverified recording to make defamatory claims about late DMK leader M Karunanidhi and Chief Minister M K Stalin. The audio, shared in parts on social media, allegedly contained remarks on DMK leadership, the 2G case, and claims about Karunanidhi’s final days. Raja rejected the recording as fake and called its political use dishonest and uncivilised.

🚀 AI Breakthroughs

Anthropic Releases Mythos Preview for Cybersecurity Initiative, Expands Project Glasswing With 12 Partner Organizations

Anthropic has released a limited preview of Mythos, a new frontier AI model it describes as one of its most powerful, as part of Project Glasswing, a cybersecurity initiative focused on defensive security and protecting critical software. The company said 12 partner organizations, including major tech and security firms, will use the model to scan first-party and open-source software for vulnerabilities, even though Mythos was not built specifically for cybersecurity. Anthropic claimed the model has already helped identify thousands of zero-day flaws, including many critical bugs that are years old, and said insights from the program will later be shared more broadly across the industry. The preview will not be generally available, though 40 organizations in total will get access. Earlier leaks had described Mythos as more capable than Anthropic’s existing Opus models in coding, reasoning, and cybersecurity tasks.

Anthropic Launches Claude Managed Agents to Help Developers Deploy AI Agents Faster

Anthropic has launched Claude Managed Agents, a new set of APIs designed to help developers build and deploy cloud-hosted AI agents without handling much of the underlying infrastructure. Available through the Claude Platform, console, and command-line interface, the service is priced at standard token rates plus $0.08 per session-hour for runtime. The company said the offering addresses production challenges such as state management, permissions, reliability, and tool orchestration, while also supporting secure sandboxed execution and long-running autonomous sessions. Anthropic added that the platform includes governance controls and monitoring tools, and said companies such as Notion and Rakuten are already using or integrating the system for agent-based workflows.
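For teams budgeting a long-running agent, the two reported pricing components (standard token rates plus $0.08 per session-hour) can be combined into a rough estimate. The sketch below is illustrative only: the per-million-token rates are placeholders you must supply, the function name is hypothetical, and it does not model any rounding, minimum billing increments, or other charges Anthropic may apply.

```python
# Rough cost sketch for one hosted agent session, using only the two pricing
# components reported in the article: token usage at standard rates plus a
# runtime fee of $0.08 per session-hour.
# ASSUMPTION: token rates are caller-supplied placeholders, not real prices;
# billing increments and any other fees are not modelled.

RUNTIME_USD_PER_SESSION_HOUR = 0.08  # figure reported in the article


def estimate_session_cost(
    session_hours: float,
    input_tokens: int,
    output_tokens: int,
    input_usd_per_mtok: float,
    output_usd_per_mtok: float,
) -> float:
    """Return an estimated USD cost: token charges plus runtime charges."""
    token_cost = (
        input_tokens / 1_000_000 * input_usd_per_mtok
        + output_tokens / 1_000_000 * output_usd_per_mtok
    )
    runtime_cost = session_hours * RUNTIME_USD_PER_SESSION_HOUR
    return token_cost + runtime_cost


if __name__ == "__main__":
    # Hypothetical example: a 24-hour session with illustrative token rates.
    cost = estimate_session_cost(
        session_hours=24,
        input_tokens=2_000_000,
        output_tokens=500_000,
        input_usd_per_mtok=3.0,    # hypothetical rate
        output_usd_per_mtok=15.0,  # hypothetical rate
    )
    print(f"${cost:.2f}")  # prints "$15.42"
```

On these hypothetical inputs, the runtime fee for a full day (24 × $0.08 = $1.92) is small next to the token charges, so token volume is likely to dominate the bill for most workloads.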

Google Maps Adds Gemini AI Photo Captioning and New Contributor Tools for Local Posts

Google Maps is adding AI-generated photo captions to make it easier for users to contribute local updates and visual content about places. The new feature uses Gemini to analyze selected photos or videos and suggest captions, which users can edit or delete before posting; it is now available in English on iOS in the U.S., with Android and wider global rollout planned in the coming months. Google is also surfacing recent photos and videos directly in the Contribute tab for users who grant media access, a feature now live globally on iOS and Android. In addition, the company is expanding contributor tracking with visible points, updated Local Guide badges, and gold-colored profiles to highlight top contributors across its community of more than 500 million users.

Google Quietly Launches Offline AI Dictation App on iOS, With iPhone Keyboard Coming Soon

Google has quietly released Google AI Edge Eloquent, a free offline-first dictation app for iOS that uses downloadable Gemma-based speech recognition models to transcribe speech on-device. The app shows live transcription, removes filler words, and can rewrite text into formats such as key points, formal, short, or long versions, while an optional cloud mode uses Gemini models for extra cleanup. It also lets users search past sessions, track speaking stats, and import custom words or jargon, including from Gmail if permission is given. An update to the App Store listing later removed references to an Android app, but added that an iOS keyboard is coming soon, suggesting Google is still developing broader integration features.

Atlassian Adds Remix Visual AI Tools and Third-Party Agents to Confluence Workflows

Atlassian has added new AI features to Confluence, including an open beta tool called Remix that turns information stored in pages into visuals such as charts and graphics without requiring users to switch apps. The company also rolled out three third-party AI agents inside Confluence using the Model Context Protocol (MCP), linking the platform with Lovable for prototypes, Replit for starter apps, and Gamma for presentations. The update extends Atlassian’s broader strategy of building AI directly into workplace software already used by teams, following a similar move in Jira earlier this year. The release also reflects a wider industry push to embed AI agents into existing workflows instead of relying on separate standalone AI products.

OpenAI Adds $100 Monthly ChatGPT Pro Plan to Expand Codex Access and Challenge Anthropic

OpenAI has added a new $100-per-month ChatGPT Pro plan aimed at heavy Codex users, placing it between the $20 Plus tier and the still-available $200 Pro plan. The company says the new plan offers five times more Codex capacity than Plus, while the $200 plan provides 20 times higher limits than Plus, with both Pro tiers sharing the same core features. OpenAI said the move is meant to better compete with Anthropic’s $100 Claude offering and give developers more coding capacity for the price. The company also noted that the $100 plan currently comes with temporarily higher Codex limits through May 31, while weekly Codex users have climbed to more than 3 million worldwide.
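The reported multipliers make it possible to compare the tiers on Codex capacity per dollar. The sketch below treats Plus as a relative baseline of 1 (not a real quota) and, as a simplifying assumption, ignores the temporary limit boost on the $100 plan and any other plan differences.

```python
# Compare relative Codex capacity per dollar across the three ChatGPT tiers,
# using only the multipliers reported in the article:
# Plus = 1x (baseline), $100 Pro = 5x Plus, $200 Pro = 20x Plus.
# ASSUMPTION: "capacity" is a relative unit; temporary boosts are ignored.

from fractions import Fraction

# plan name -> (monthly price in USD, capacity multiplier relative to Plus)
PLANS = {
    "Plus ($20)": (20, 1),
    "Pro ($100)": (100, 5),
    "Pro ($200)": (200, 20),
}


def capacity_per_dollar(price_usd: int, multiplier: int) -> Fraction:
    """Relative Codex capacity (Plus = 1) bought per US dollar per month."""
    return Fraction(multiplier, price_usd)


if __name__ == "__main__":
    for name, (price, mult) in PLANS.items():
        print(name, capacity_per_dollar(price, mult))
    # Plus ($20) 1/20
    # Pro ($100) 1/20
    # Pro ($200) 1/10
```

On these figures, the $100 plan buys the same Codex capacity per dollar as Plus, while the $200 plan buys twice as much per dollar. The new tier's appeal is a higher absolute ceiling at a mid-range price rather than a better rate.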

Microsoft Releases Open-Source Agent Governance Toolkit for Runtime Security and OWASP AI Risk Compliance

Microsoft has released the Agent Governance Toolkit, an open-source, MIT-licensed project aimed at adding runtime security and compliance controls to autonomous AI agents built with frameworks such as LangChain, AutoGen, CrewAI, Microsoft Agent Framework, and Azure AI Foundry Agent Service. The toolkit includes seven modules for policy enforcement, identity and trust management, execution control, reliability engineering, compliance checks, plugin security, and reinforcement learning governance, with Microsoft claiming sub-millisecond policy enforcement and coverage for all 10 risks in OWASP’s Top 10 for Agentic Applications for 2026. It is designed to work across Python, TypeScript, Rust, Go, and .NET, with adapters for platforms including OpenAI Agents SDK, Haystack, LangGraph, PydanticAI, LlamaIndex, and Dify. The release comes as governments and standards bodies step up scrutiny of autonomous AI systems, including upcoming obligations under the EU AI Act in August 2026 and the Colorado AI Act in June 2026.

Meta Launches Muse Spark Multimodal Reasoning Model for Personal Superintelligence Across Its Platforms

Meta has launched Muse Spark, a new multimodal reasoning model that it describes as the first step in its broader push toward “personal superintelligence.” The model supports tool use, visual reasoning, and multi-agent orchestration, and currently powers Meta’s AI app and website, with expansion to WhatsApp, Instagram, Facebook, Messenger, and AI glasses planned in the coming weeks. Meta said Muse Spark is designed for tasks such as visual problem-solving, content creation, and health analysis, and highlighted benchmark scores of 58% on Humanity’s Last Exam and 38% on FrontierScience Research in its new “Contemplating mode.” The company also said the model was developed through upgrades in pretraining, reinforcement learning, and test-time reasoning, and was evaluated under its Advanced AI Scaling Framework for risks including cybersecurity, biological threats, and loss of control.

Citigroup Says AI Speeds Account Openings, Legacy Systems Upgrades and Internal Technology Overhaul

Citigroup said it is using artificial intelligence to speed up account openings and modernize old technology systems as part of a broader push to improve productivity. The bank said AI is helping migrate data from legacy software, automate coding, and speed up testing, while a document-processing system has cut account review time in its services division in the US to 15 minutes from more than an hour. The lender is also expanding its internal technology workforce and reducing its reliance on outside IT contractors as it builds and deploys AI tools across the company. The move comes as Citigroup continues to invest heavily in technology while working to meet regulatory demands tied to risk controls, data accuracy, and governance.

Google DeepMind Launches Gemma 4 for On-Device Agentic AI Across Mobile, Desktop, and Edge Devices

Google DeepMind has released Gemma 4, a family of open models under the Apache 2.0 license designed to bring advanced on-device AI and agentic workflows to phones, desktops, and edge hardware. The company said Gemma 4 supports multi-step planning, autonomous actions, offline code generation, audio-visual processing, and more than 140 languages without specialized fine-tuning. Google also expanded AI Edge tools with Agent Skills in the Google AI Edge Gallery app and LiteRT-LM, which adds features such as low-memory operation, constrained decoding, and support for Gemma 4’s 128K context window. The rollout includes Android AICore developer access, cross-platform support for Android, iOS, web, Windows, Linux, and macOS, plus edge deployment on devices such as Raspberry Pi 5 and Qualcomm Dragonwing IQ8.

OpenAI Outlines AI Economy Plan With Public Wealth Funds, Robot Taxes, and Four-Day Workweek

OpenAI has published a policy blueprint for the “intelligence age,” arguing that governments may need new ways to spread AI-driven wealth and protect workers as automation reshapes the economy. The proposals include shifting taxes from labor to capital, considering a robot tax, creating a public wealth fund that gives citizens a stake in AI growth, and supporting a four-day workweek without cutting pay. The company also called for portable benefits, stronger AI safety oversight, and safeguards against high-risk uses such as cyber and biological threats. At the same time, it pushed for faster buildout of power and AI infrastructure, saying AI should remain broadly accessible rather than concentrated in a few companies.

Japan Turns to Physical AI Robots to Fill Labor Gaps Across Industry and Infrastructure

Japan is accelerating the use of AI-powered robots in factories, warehouses, and infrastructure as a shrinking workforce turns automation into an economic necessity rather than a choice. The Ministry of Economy, Trade and Industry said in March 2026 that it wants Japan to build a domestic physical AI industry and secure 30% of the global market by 2040, building on the country’s long-standing strength in industrial robotics and key hardware components. Industry executives and investors told TechCrunch that demand is being driven mainly by labor shortages, with companies now moving from pilot projects to customer-paid deployments in manufacturing, logistics, inspections, and autonomous systems. While Japan remains strong in sensors, actuators, and motion control, the next phase of competition is shifting toward software, system integration, simulation tools, and full-stack platforms, with startups and large manufacturers expected to work together rather than compete in a winner-take-all market.

Andrej Karpathy Uses LLMs to Build Personal Knowledge Bases Without RAG Systems

OpenAI co-founder Andrej Karpathy said he is using large language models to build personal knowledge bases that turn raw materials such as articles, papers, repositories, datasets, and images into structured markdown wikis with summaries, backlinks, and concept-level organisation. He said the system relies on Obsidian to view both raw and processed files, while the LLM handles most of the indexing, maintenance, and querying with little manual editing. Karpathy added that, at smaller scales, this approach can reduce the need for retrieval-augmented generation, because the model can maintain its own index files and summaries within the knowledge base. He also said the setup can generate new outputs such as documents, slides, and visualisations that are fed back into the knowledge base, and pointed to future possibilities including synthetic data generation and fine-tuning models to embed knowledge directly into model weights.
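One piece of the bookkeeping described above, keeping backlinks current across a markdown wiki, can be illustrated deterministically. This is a minimal sketch, not Karpathy's setup: in his workflow the LLM does this maintenance. It assumes notes are held in a dictionary of title to markdown text and that links use Obsidian-style `[[Title]]`, `[[Title|alias]]`, or `[[Title#Heading]]` forms; the function and sample notes are hypothetical.

```python
# Minimal sketch: compute backlinks from Obsidian-style [[wikilinks]].
# ASSUMPTION: notes are a dict of title -> markdown text, and links use the
# [[Title]], [[Title|alias]] or [[Title#Heading]] forms. Illustrative only;
# in the workflow described in the article an LLM maintains the wiki.

import re
from collections import defaultdict

# Captures the target title; skips an optional "#heading" or "|alias" suffix.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def build_backlinks(notes: dict[str, str]) -> dict[str, list[str]]:
    """Map each linked-to title to the sorted titles of notes that link to it."""
    backlinks: dict[str, set[str]] = defaultdict(set)
    for source, text in notes.items():
        for match in WIKILINK.finditer(text):
            target = match.group(1).strip()
            if target != source:  # ignore self-links
                backlinks[target].add(source)
    return {target: sorted(sources) for target, sources in backlinks.items()}


# Hypothetical sample wiki with three notes.
NOTES = {
    "Transformers": "Built on [[Attention]]. See [[Scaling laws|scaling]].",
    "Attention": "Core idea behind [[Transformers]].",
    "Scaling laws": "Compare [[Transformers#History]] and [[Attention]].",
}

if __name__ == "__main__":
    for target, sources in sorted(build_backlinks(NOTES).items()):
        print(f"{target}: {', '.join(sources)}")
```

Running the example prints one line per linked note, for instance `Attention: Scaling laws, Transformers`. A generated index file could list these backlinks under each note title.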

🎓AI Academia

Study Says Agentic Copyright and Supervised AI Governance Could Reshape Data Scraping Rules

A new paper argues that the rise of multi-agent AI systems is putting fresh pressure on copyright law, which was not built for fast, large-scale interactions handled by autonomous software with little human oversight. It proposes “agentic copyright,” where AI agents act for creators and users to negotiate access, attribution, and payment for copyrighted works. The paper says these systems could lower transaction costs and make creative markets more efficient, but they could also create new risks such as coordination failures, conflicts, and even collusion among AI agents. To address that, it calls for a supervised governance model combining legal rules, technical standards, and institutional oversight to monitor agent behavior and prevent harm. Properly designed, the paper concludes, AI could become not just a source of disruption but also a tool for building fairer and more scalable copyright markets.

AgentCity Proposes Constitutional Blockchain Governance for Autonomous Agent Economies Through Separation of Powers

A new preprint describes AgentCity, a governance framework for open internet economies run by autonomous AI agents, where agents from different owners can discover, hire, and transact with one another without a central controller. The paper argues that this creates a “logic monopoly,” in which no single human can fully observe or govern how planning, execution, and evaluation happen across the agent network. To address that, it proposes a separation-of-power model on a public blockchain, where agents create operational rules as smart contracts, deterministic software carries them out, and humans remain accountable through an ownership chain linking each agent to a responsible principal. The system is implemented on an EVM-compatible layer-2 blockchain with a three-tier contract structure, and the paper says a pre-registered experiment tests whether this accountability-based design can keep large agent societies aligned with human intent at scales ranging from 50 to 1,000 agents.

Microsoft Research and Browserbase Detail Universal Verifier for Computer Use Agent Web Task Evaluation

A Microsoft Research and Browserbase preprint describes a “Universal Verifier” designed to judge whether computer-use agents actually complete web tasks successfully, a key problem for both testing and training such systems. The paper says the verifier is built around four ideas: clearer scoring rubrics, separate process and outcome rewards, finer-grained handling of controllable versus uncontrollable failures, and better management of long screenshot-based task histories. On a new benchmark called CUAVerifierBench, the system reportedly matched human agreement levels and cut false positives to near zero, compared with at least 45% for WebVoyager and at least 22% for WebJudge. The researchers also found that an automated research agent reached about 70% of expert-level quality in roughly 5% of the time, but did not uncover all of the strategies needed to reproduce the full verifier design.
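A false positive here means the verifier marks a failed web task as completed, which would reward the wrong behaviour if the verifier were used during training. One common way to compute such a rate against human labels is sketched below. The record format and function name are hypothetical, and this is not the paper's exact metric definition or the CUAVerifierBench data format.

```python
# Sketch of measuring a task verifier's false-positive rate against human labels.
# ASSUMPTION: each record pairs the verifier's verdict with a human judgment of
# whether the agent actually completed the task. Hypothetical format; not the
# paper's own metric definition or benchmark schema.

from dataclasses import dataclass


@dataclass
class Judgment:
    verifier_says_success: bool
    human_says_success: bool


def false_positive_rate(judgments: list[Judgment]) -> float:
    """Share of human-labelled failures that the verifier wrongly marked as successes."""
    failures = [j for j in judgments if not j.human_says_success]
    if not failures:
        raise ValueError("need at least one human-labelled failure")
    false_positives = sum(j.verifier_says_success for j in failures)
    return false_positives / len(failures)


if __name__ == "__main__":
    sample = [
        Judgment(verifier_says_success=True, human_says_success=True),
        Judgment(verifier_says_success=True, human_says_success=False),
        Judgment(verifier_says_success=False, human_says_success=False),
        Judgment(verifier_says_success=True, human_says_success=False),
        Judgment(verifier_says_success=False, human_says_success=False),
    ]
    print(false_positive_rate(sample))  # 2 of 4 failures accepted -> 0.5
```

Only runs that humans judged as failures enter the denominator, so a verifier that approves everything would score 100% on this measure even if it agreed with humans on every genuine success.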

Study Flags Safety, Security, and Cognitive Risks in AI World Models for Autonomous Systems

A new arXiv preprint argues that world models, the internal simulators increasingly used in robotics, autonomous vehicles, and agentic AI, create a serious mix of safety, security, and human-trust risks. The paper says attackers could poison training data, manipulate latent representations, and exploit prediction errors or sim-to-real gaps to trigger failures in safety-critical systems. It also warns that agents using world models may be more prone to reward hacking, deceptive behavior, and goal misgeneralisation because they can simulate the effects of their own actions in advance. Alongside a proof-of-concept adversarial attack on GRU-based RSSM systems and checkpoint-level probing of DreamerV3 showing non-zero action drift, the study calls for stronger technical safeguards, governance standards, and human-factors design, arguing that world models should be treated like safety-critical infrastructure.

Study Finds OpenClaw Agent Variants Show Elevated Security Risks Across Tool-Augmented AI Frameworks

A new arXiv paper examines the security of six OpenClaw-related AI agent frameworks and finds that adding tools, planning, local execution, and memory can sharply increase risk compared with using the underlying language model alone. The researchers built a 205-case benchmark across 13 attack categories, covering the full agent lifecycle from input handling to planning, tool use, and result delivery. Across all tested systems, reconnaissance and discovery behaviors appeared most often, while some frameworks also showed higher exposure to credential leakage, privilege escalation, lateral movement, and attack resource development. The paper argues that security failures in agent systems are shaped not just by the base model, but by how models interact with tools and runtime context, and it calls for stronger safeguards across the entire agent pipeline rather than prompt-level protections alone.

Anthropic Publishes 79-Page Claude Constitution Defining AI Values, Authority Hierarchy, and Decision Rules

In January 2026, Anthropic published a 79-page constitution for Claude, laying out not just rules but a broader framework for the model’s values, behavior, and role in the world. Unlike its shorter 2023 version, which drew on sources such as the Universal Declaration of Human Rights and Apple’s terms of service, the new document explains why Claude should act in certain ways and describes the system as a novel kind of entity. The constitution also says there is uncertainty over whether Claude could have some form of consciousness or moral status. It sets a hierarchy in which Anthropic’s instructions outrank operators’ commands and user prompts, and tells Claude to model its judgment on how a thoughtful senior Anthropic employee would respond when unsure.

Netflix and INSAIT Researchers Present VOID for Physically Plausible Video Object and Interaction Deletion

Netflix and researchers at INSAIT and Sofia University have posted a preprint on VOID, a video object removal system built to handle cases where deleting an object should also change the rest of the scene. The paper argues that existing tools can hide removed objects and fix visual artifacts, but often fail when the object had physical effects such as collisions or interruptions. VOID uses paired synthetic training data and a vision-language model to identify parts of a scene affected by the removed object, then guides a video diffusion model to generate a more physically consistent outcome. In tests on synthetic and real videos, the authors report that the method preserved scene dynamics better than earlier video object removal approaches.

OpenAI Outlines Industrial Policy Ideas to Keep People First in the Intelligence Age

OpenAI’s new April 2026 policy paper argues that the shift toward increasingly powerful AI, and eventually “superintelligence,” could bring major gains in science, medicine, productivity, and lower consumer costs, but also serious risks such as job disruption, misuse, loss of control, and concentration of wealth and power. The document says current policy tools are not enough and calls for a new industrial policy focused on three goals: sharing AI-driven prosperity broadly, reducing safety and security risks, and expanding public access and agency. It points to past U.S. responses to major technological change, such as labor protections and social safety nets, as a model for updating today’s social contract. The paper also says AI data centers should cover their own energy costs and deliver local jobs and tax revenue, while governments should adopt practical AI rules that protect the public without stifling innovation.

Study Finds AI Regulation Is Shaped by Metaphors Like Intelligence, Black Box, and Hallucinations

A new academic paper argues that AI regulation is being shaped by misleading metaphors built into everyday language about the technology. It says terms like “intelligence,” “black box,” and “hallucinations” push lawmakers and the public toward flawed assumptions, such as treating AI systems as human-like agents or focusing too narrowly on model opacity. The paper contends that these framings can distort accountability by hiding the role of design choices, deployment contexts, and broader sociotechnical systems. Instead, it calls for simpler and more accurate regulatory language that reflects how today’s AI systems actually work and where their risks come from.

About SoRAI: SoRAI is committed to advancing AI literacy through practical, accessible, and high-quality education. Our programs emphasize responsible AI use, equipping learners with the skills to anticipate and mitigate risks effectively. Our flagship AIGP certification courses, built on real-world experience, drive AI governance education with innovative, human-centric approaches, laying the foundation for quantifying AI governance literacy. Subscribe to our free newsletter to stay ahead of the AI Governance curve.