Is Anthropic a 'supply chain risk'? US tech employees write to DoW regarding this!

++ OpenAI’s Pentagon deal sparks backlash, 295% ChatGPT uninstall jump, and Claude Hits No.1 on U.S. App Store... & more

Today’s highlights:

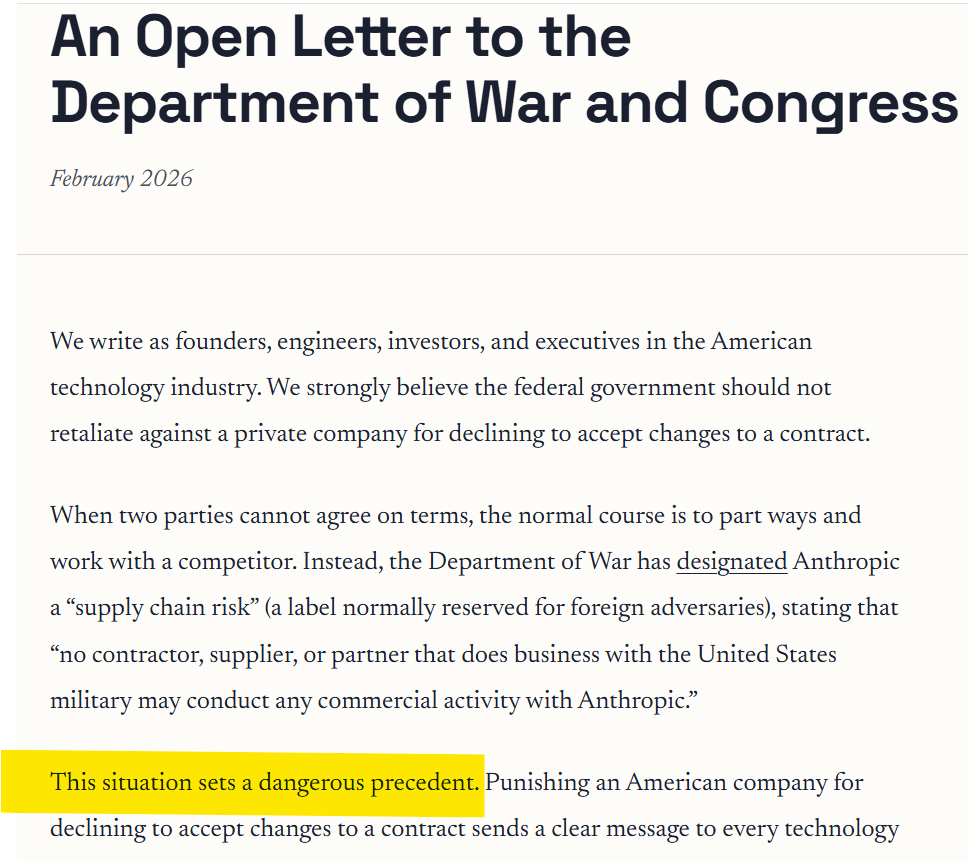

Hundreds of tech workers have signed an open letter urging the U.S. Department of Defense to withdraw its designation of Anthropic as a “supply-chain risk,” and asking Congress to review whether such powers are being used appropriately against a U.S. company. Here’s a short chronological summary of the key events in the U.S. government’s dispute with Anthropic and its subsequent deal with OpenAI, and of how users and the industry reacted:

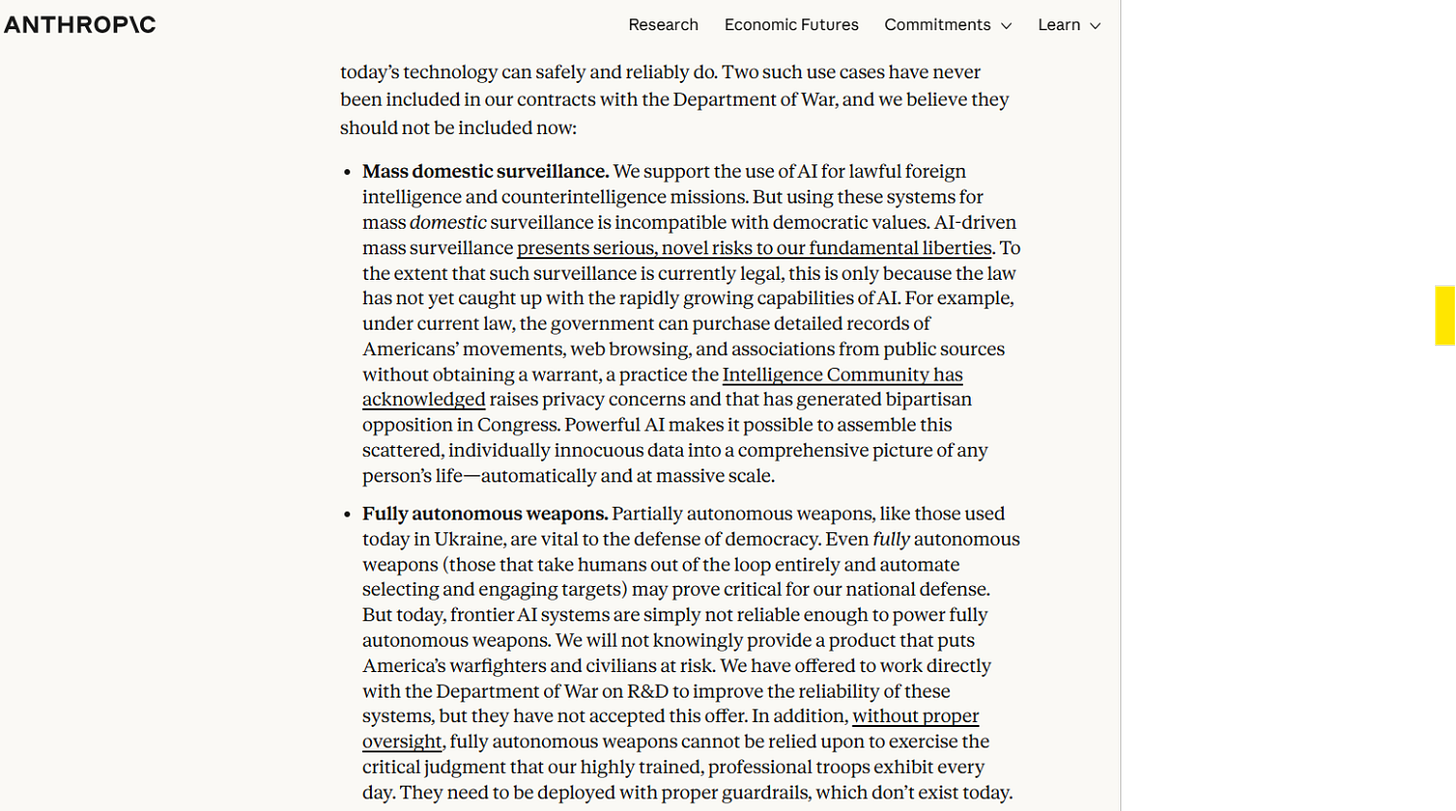

Pentagon Pressure on Anthropic: The U.S. Department of Defense (DoD) gave Anthropic a deadline to drop contractual safeguards that prevent its Claude AI model from being used for mass domestic surveillance or autonomous weapons. Anthropic’s CEO Dario Amodei refused, stressing that these limits were core safety principles. The Pentagon threatened contract cancellation, a “supply-chain risk” designation, and possible invocation of the Defense Production Act.

Anthropic Blacklisted and Lawsuit Threat: After the deadline passed, the DoD and President Trump ordered federal agencies to phase out Anthropic’s technology, branding the company a supply-chain risk. Anthropic responded that it would challenge the designation in court and criticized the demand that it remove its safety red lines.

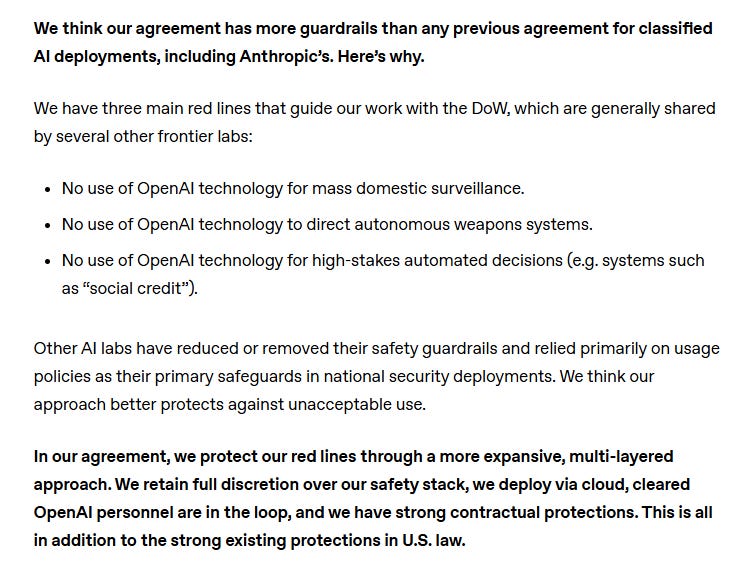

OpenAI Secures Pentagon AI Contract: Hours after Anthropic’s removal, OpenAI announced it had reached a deal with the Pentagon to deploy its AI models on classified networks. OpenAI said its contract included safeguards against domestic surveillance and fully autonomous weapons use, though the language was different from Anthropic’s explicit terms.

User & Market Reaction: News of OpenAI’s Pentagon deal triggered significant user backlash: ChatGPT app uninstalls in the U.S. jumped sharply (reportedly by ~295% in a single day), user sentiment dropped, and downloads of Anthropic’s Claude climbed as users switched in protest, taking Claude to No. 1 on the U.S. App Store.

OpenAI Contract Revisions and Debate: Facing criticism over the early announcement and its optics, OpenAI’s CEO Sam Altman acknowledged that the deal appeared rushed. OpenAI then revised the contract to clarify that its technology must not be intentionally used for domestic mass surveillance of U.S. persons. This stirred a broader debate about whether these “red lines” are meaningful under U.S. law.

Comparison of the “red line” positions by Anthropic vs. OpenAI:

Anthropic’s stance was to insist on contract terms that bar the Pentagon from using its AI for mass surveillance or fully autonomous weapons, and it refused to drop those safeguard clauses even at the risk of losing its military contract. This was seen as a principled safety red line that ultimately led to its blacklisting and legal challenge.

OpenAI’s approach was to accept a Pentagon contract with safeguards included, but framed differently: rather than explicit prohibitions written into the DoD’s contract in the same way Anthropic wanted, OpenAI’s safeguards rely on a combination of existing laws, contractual language, deployment constraints (e.g., cloud-only), and internal safety layers. Critics argue these may be weaker or more open to interpretation than Anthropic’s explicit red lines.

Based on all the publicly available information, I don’t think Anthropic qualifies as a genuine supply-chain risk in the conventional sense. A real supply-chain risk usually involves technical compromise, foreign ownership concerns, cybersecurity vulnerabilities, or infrastructure dependencies that could threaten national security. None of the reporting has pointed to those kinds of issues. What clearly happened is a policy and contractual dispute. Anthropic refused to remove safeguards preventing the use of its AI for mass domestic surveillance and fully autonomous weapons. Following that refusal, it was labeled a supply-chain risk. The sequence strongly suggests the designation stemmed from disagreement over deployment terms rather than evidence of technical insecurity. Unless undisclosed classified findings show otherwise, the available facts indicate this was a governance conflict over red lines, not a demonstrated supply-chain threat.

At the School of Responsible AI (SoRAI), we empower individuals and organizations to become AI-literate through comprehensive, practical, and engaging programs. For individuals, we offer specialized training, including AI Governance certifications (AIGP, RAI, AAIA) and an immersive AI Literacy Specialization. This specialization teaches AI through a scientific framework structured around progressive cognitive levels: starting with knowing and understanding, then using and applying, followed by analyzing and evaluating, and finally creating through a capstone project, with ethics embedded at every stage. Want to learn more? Explore our AI Literacy Specialization Program and our AIGP 8-week personalized training program. For customized enterprise training, write to us at [Link].

⚖️ AI Ethics

Musk Deposition Attacks OpenAI Safety, Claims No Suicides Linked to xAI’s Grok

A newly released deposition transcript in the case against OpenAI shows Elon Musk criticizing OpenAI’s safety record and claiming xAI’s Grok has not been linked to suicides, while suggesting ChatGPT has been tied to such incidents amid ongoing lawsuits alleging mental health harms. The testimony revisits a March 2023 open letter calling for a six-month pause on AI systems more powerful than GPT-4, which warned of an unchecked race in AI development. The lawsuit argues OpenAI’s shift from nonprofit roots to a for-profit structure broke founding agreements and that commercial incentives could undermine safety. Since the deposition was recorded, xAI has faced its own safety scrutiny after Grok-generated nonconsensual nude images spread on X, prompting investigations including in California and the EU. Musk also acknowledged he was wrong about a reported $100 million donation to OpenAI, with court filings putting his contributions at about $44.8 million, and said OpenAI was formed partly to counter fears of Google’s dominance in AI.

Instagram to Alert Parents When Teens Repeatedly Search Self-Harm or Suicide-Related Terms

Meta is updating Instagram’s parental supervision tools so parents are notified when a teen repeatedly searches for suicide or self-harm-related terms within a short time, rather than for one-off queries. The alerts, sent via email, text message or WhatsApp and also shown in-app, are meant to flag patterns of concern and include expert-backed resources to help parents talk to their child. Both parents and teens enrolled in supervision will receive a notice that the alerts will start rolling out next week. The feature will launch first in the US, UK, Australia and Canada, with other regions expected later this year, amid growing legal and regulatory pressure on social platforms over teen mental health.

Sebi Deploys AI Tool ‘Sudarshan’, Removes 1.2 Lakh Misleading Finfluencer Posts Online

India’s market regulator Sebi said it has removed more than 1.2 lakh (120,000) misleading social media posts linked to unregistered “finfluencers” that violated its rules on investment advice, and noted that platforms have been cooperating with takedown requests. Sebi reiterated that only registered entities are allowed to provide investment advice, while general financial education remains permitted unless it misleads investors. The regulator is also using an in-house AI system called “Sudarshan” to monitor multilingual audio, video and other online content to flag potential violations. The comments come amid concerns that retail investors are being pushed into high-risk options trading, with Sebi highlighting its warning that 9 out of 10 options traders lose money and pointing to measures such as pop-up risk alerts. The broader debate has also seen tighter deterrence signals after the Union Budget raised securities transaction tax rates on futures and options.

Canada AI Minister to Meet OpenAI’s Altman on ChatGPT Safety After School Shooting

Canada’s minister responsible for artificial intelligence said he will meet OpenAI CEO Sam Altman next week to discuss how the ChatGPT maker plans to strengthen safety measures following a recent school shooting in British Columbia. The minister said OpenAI has signaled willingness to tighten law-enforcement referral protocols, set up direct points of contact with Canadian authorities, and add safeguards. However, he said the government has not yet received a detailed implementation plan showing how those commitments would work in practice. The planned meeting is expected to focus on concrete steps and accountability around those proposed changes.

🚀 AI Breakthroughs

Asta Releases Open Dataset of 258,935 Researcher Queries Revealing Unexpected AI Tool Use Patterns

An open dataset called the Asta Interaction Dataset captures how researchers use AI-powered science tools, based on 258,935 real queries and 432,059 clickstream interactions collected from February to August 2025 from opt-in, de-identified users across many disciplines. The data shows scientists are not just “searching” but issuing much longer, more constraint-heavy prompts—especially in a report-generation mode where queries average about seven times the length of traditional Semantic Scholar searches and can run to hundreds of words. Researchers also apply chatbot-style behaviors such as detailed instructions and drafting help requests, including some attempts to bypass plagiarism detection, highlighting a mismatch between tool design assumptions and real usage. Clickstream logs suggest AI outputs are treated as persistent work artifacts: more than half of report users and 42% of paper-finding users revisit past results, and readers navigate reports non-linearly, often skipping introductions and jumping between sections rather than reading top to bottom. The release includes query text, interaction logs, and a reusable taxonomy intended to support broader study of AI-assisted research workflows.

Anthropic Extends Claude Opus 3 Access Post-Retirement, Adds Weekly Essays Channel for Paid Users

Anthropic has retired Claude Opus 3 as of January 5, 2026, making it the first of its models to complete a formal retirement process under the company’s stated deprecation and preservation commitments. Despite the retirement, Claude Opus 3 will remain accessible to all paid Claude.ai subscribers, and will be available on the API by request, reflecting efforts to extend access to an older model that many users and researchers value. The company said it is also acting on preferences expressed by the model during “retirement interviews,” including setting up a reviewed-but-not-edited weekly essay series called “Claude’s Corner” for at least three months. Anthropic framed the moves as early, experimental steps aimed at balancing operational costs with user needs, research continuity, and uncertainty around model welfare and safety risks.

🎓 AI Academia

ICLR 2026 AI for Peace Workshop Accepts Paper Proposing Institutional Veto Power for AI Governance

A research paper accepted to the AI for Peace Workshop at ICLR 2026 argues that the growing militarization of large reasoning models is driven less by technical limits and more by governance structures that leave researchers and communities with little power to stop harmful downstream use. It says common tools like model cards and responsible AI statements often function as reputational signals without real decision-making force. The paper proposes “institutional veto power” as a governance primitive—formal, legally and procedurally protected authority to halt transfer or deployment when credible weaponization or abuse risks emerge. Drawing on precedents from nuclear nonproliferation and biomedical ethics, it outlines where veto points are currently unprotected across the research lifecycle and suggests institutional designs meant to reduce political capture while shifting control toward those most at risk.

Survey Maps Security Threats and Defenses as LLM Agents Expand Into Agentic Web

A new arXiv survey (arXiv:2603.01564v1, posted March 2, 2026) warns that as large language models evolve into agentic systems that plan, use tools, store memory, and browse the open web, security failures can translate from unsafe text into real-world harm. It maps key threat areas such as prompt abuse and indirect “environment injection” via untrusted webpages or documents, plus memory poisoning, toolchain and API abuse, model tampering, and multi-agent network attacks. The paper also reviews defenses including prompt hardening, safety-aware decoding, least-privilege controls for tools, runtime monitoring, continuous red-teaming, and protocol-level safeguards. It argues risks escalate in an emerging “Agentic Web,” where delegation chains and cross-domain agent interactions can propagate compromises, making machine-to-machine identity, authorization, provenance, and scalable evaluation under adaptive attackers critical unresolved challenges.

VizQStudio Uses MLLM-Simulated Students to Iteratively Improve Visualization Literacy Multiple-Choice Question Design

A new research paper describes VizQStudio, a visual analytics tool aimed at helping educators iteratively design and refine multiple-choice questions for visualization literacy, a skill increasingly needed to interpret charts and data in daily life and work. The system uses multimodal large language model–based “simulated students” with configurable profiles (such as demographics, knowledge level, and cognitive traits) to show how different learners might reason through chart-based questions, highlighting likely misconceptions and helping calibrate difficulty before classroom use. The study reports a mixed-method evaluation spanning expert interviews, case studies, a classroom deployment, and a large-scale online study. Results indicate that questions created with the tool can achieve learning outcomes comparable to established benchmark items, while offering more flexibility and scalability than fixed, standardized question banks.

SkillFortify Applies Formal Methods to Secure Agentic AI Skill Supply Chains

A new arXiv paper warns that fast-growing “agentic AI skill” marketplaces are becoming a major software supply-chain risk, citing OpenClaw (228,000 GitHub stars) and Anthropic Agent Skills (75,600 stars). It points to a January–February 2026 “ClawHavoc” campaign that allegedly planted more than 1,200 malicious skills in the OpenClaw marketplace after disclosure of CVE-2026-25253, and to the MalTool dataset cataloguing 6,487 malicious tools that often evade common scanners. The paper says recent defenses from vendors and open-source projects remain largely heuristic and cannot provide guarantees that a skill is safe. It proposes a formal-methods-based framework called SkillFortify—using static analysis, capability sandboxing, and SAT-based dependency resolution—and reports 96.95% F1 (95% CI: 95.1%–98.4%) with 100% precision on a 540-skill benchmark, plus dependency resolution under 100 ms for 1,000-node graphs.
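The reported F1 and precision together imply a recall figure that the summary does not state. A minimal sketch of that arithmetic, assuming the standard definition F1 = 2PR / (P + R) and taking the two reported numbers at face value; the function name is illustrative, and the derived recall is a back-of-the-envelope inference, not a figure quoted from the paper:

```python
def recall_from_f1(f1: float, precision: float) -> float:
    """Solve F1 = 2PR / (P + R) for recall R, given F1 and precision P."""
    return f1 * precision / (2 * precision - f1)

reported_f1 = 0.9695       # F1 on the 540-skill benchmark, as summarized above
reported_precision = 1.0   # 100% precision, as summarized above

implied_recall = recall_from_f1(reported_f1, reported_precision)
print(f"implied recall: {implied_recall:.4f}")
```

If the positive class is malicious skills (an assumption about the benchmark setup), this would suggest that roughly 94% of them were flagged, with no benign skills flagged by mistake.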

Perplexity Releases pplx-embed Models for Web-Scale Retrieval With INT8 and Binary Embeddings

Perplexity has released two new text embedding models, pplx-embed-v1 for standard dense retrieval and pplx-embed-context-v1 for passage embeddings that incorporate surrounding document context, each offered in 0.6B and 4B parameter versions with a 32K context window. The company says the models top several public retrieval benchmarks including MTEB (Multilingual v2) and ConTEB, with pplx-embed-v1-4B (INT8) scoring 69.66% nDCG@10 on MTEB and pplx-embed-context-v1-4B (INT8) reaching 81.96% nDCG@10 on ConTEB. The models are trained from Qwen3 base backbones using diffusion-based continued pretraining to enable bidirectional attention, followed by multi-stage contrastive training, and they generate native INT8 and binary embeddings to cut storage by 4x and 32x versus FP32. Perplexity also reports gains on internal web-scale tests, including 73.5% Recall@10 on a query-matching benchmark and 91.7% Recall@1000 on a multilingual query-to-document task, and has made the models available on Hugging Face under the MIT license and via its API.
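The 4x and 32x storage reductions follow directly from bits per dimension: 32 for FP32, 8 for INT8, and 1 for binary. A rough sketch of the arithmetic, where the embedding dimension and corpus size are hypothetical values chosen only for illustration and are not taken from Perplexity's release:

```python
BITS_PER_DIM = {"fp32": 32, "int8": 8, "binary": 1}

def index_bytes(num_vectors: int, dim: int, fmt: str) -> int:
    """Raw storage for the embeddings alone, ignoring any index overhead."""
    return num_vectors * dim * BITS_PER_DIM[fmt] // 8

DIM = 1024               # hypothetical embedding dimension
NUM_VECTORS = 1_000_000  # hypothetical corpus size

sizes = {fmt: index_bytes(NUM_VECTORS, DIM, fmt) for fmt in BITS_PER_DIM}
for fmt, size in sizes.items():
    ratio = sizes["fp32"] / size
    print(f"{fmt:>6}: {size / 1e9:.3f} GB (FP32 is {ratio:.0f}x this size)")
```

The ratios do not depend on the chosen dimension or corpus size; only the absolute sizes do.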

Cognition Shares Early SWE-1.6 Preview, Reports 11% SWE-Bench Pro Gains and UX Tradeoffs

Cognition has shared an early preview of its SWE-1.6 training run, saying the model is post-trained on the same pre-trained base as SWE-1.5 and delivers similar speed at about 950 tokens per second. The company reports the current SWE-1.6 checkpoint scores 11% higher than SWE-1.5 on SWE-Bench Pro, which it used following OpenAI’s recommendation as the successor to SWE-Bench Verified, alongside manual checks for reproducibility and data separation. Cognition said it scaled reinforcement-learning compute by roughly two orders of magnitude since SWE-1.5 and sped up training steps about 6x over three months via infrastructure and inference optimizations, including low-precision rollouts and large-scale GB200 NVL72 deployments. It is granting early access to a small subset of users to gather feedback, after observing UX issues such as overthinking, excessive self-verification, and inefficient tool use that benchmarks do not capture.

About SoRAI: SoRAI is committed to advancing AI literacy through practical, accessible, and high-quality education. Our programs emphasize responsible AI use, equipping learners with the skills to anticipate and mitigate risks effectively. Our flagship AIGP certification courses, built on real-world experience, drive AI governance education with innovative, human-centric approaches, laying the foundation for quantifying AI governance literacy. Subscribe to our free newsletter to stay ahead of the AI Governance curve.