Anthropic rejects Pentagon’s final offer to remove AI safeguards

Anthropic stands firm on two red lines for its AI technology: it must not be used for mass domestic surveillance or fully autonomous weapons systems...

++ OpenAI tightens safety checks and sets direct police contact after Canada scrutiny; New York weighs a three-year AI data center permitting moratorium; Pew: 12% of US teens use AI chatbots for emotional support; US tells diplomats to oppose foreign data sovereignty laws over AI growth; “Humanity’s Last Exam” benchmark shows current AI systems still fail; Anthropic valuation hits $380B; markets slide after viral 2028 job-loss loop report; EU delays high-risk AI guidance again; OpenAI details malicious AI use with traditional tools; “vibe researching” debate grows around AI research agents

Today’s highlights:

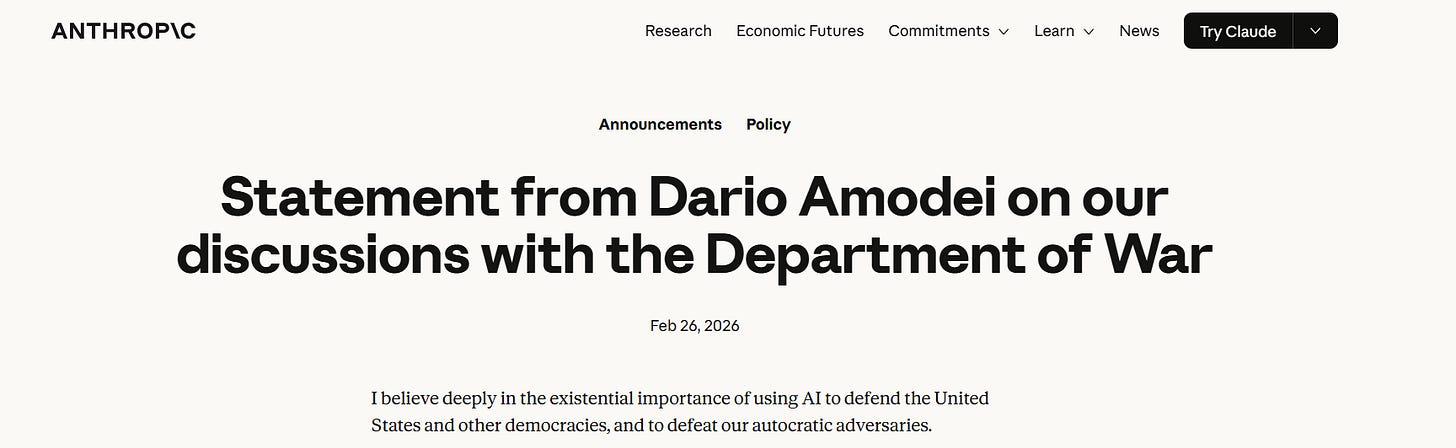

Anthropic CEO Dario Amodei said Thursday he would not grant the Pentagon unrestricted access to the company’s AI systems, arguing some military uses could undermine democratic values or exceed what current technology can do safely. He said Anthropic is seeking two guardrails: no mass surveillance of Americans and no fully autonomous weapons without a human in the loop, while the Defense Department maintains it should be free to use the model for any lawful purpose. Amodei’s comments came less than a day before a stated Friday 5:01 p.m. deadline, after the Pentagon reportedly warned it could label the firm a supply-chain risk or push action under the Defense Production Act. Amodei called the threats contradictory and said the company would prefer to keep working with the military under safeguards but would support a smooth transition if the Defense Department ends the relationship.

At the School of Responsible AI (SoRAI), we empower individuals and organizations to become AI-literate through comprehensive, practical, and engaging programs. For individuals, we offer specialized training, including AI Governance certifications (AIGP, RAI, AAIA) and an immersive AI Literacy Specialization. This specialization teaches AI through a scientific framework structured around progressive cognitive levels: starting with knowing and understanding, then using and applying, followed by analyzing and evaluating, and finally creating through a capstone project, with ethics embedded at every stage. Want to learn more? Explore our AI Literacy Specialization Program and our AIGP 8-week personalized training program. For customized enterprise training, write to us at [Link].

⚖️ AI Ethics

OpenAI to Tighten Safety Checks, Set Direct Police Contact After Canada Scrutiny

OpenAI has told the Canadian government it will tighten safety checks after criticism over how it handled a ChatGPT account linked to the alleged perpetrator of the February 10 mass shooting in Tumbler Ridge, British Columbia, Reuters reported. The company said it will set up a direct point of contact for Canadian law enforcement, adopt an enhanced referral protocol, and improve detection of repeat violators of its violent-activity policy, after ministers warned of possible legislation if safeguards do not improve quickly. OpenAI said the account had been flagged by automated systems but did not meet internal criteria for reporting at the time, and it now plans to periodically reassess those thresholds and better detect attempts to evade safeguards. The company also said it found a second linked account and shared details with authorities, while police have cited prior mental health concerns and earlier removal and return of firearms.

AI Data Center Backlash Grows as New York Weighs Three-Year Statewide Permitting Moratorium

Public opposition to AI-driven data center expansion is intensifying in the U.S., pushing lawmakers to consider pauses and tougher rules as communities raise concerns about power demand, water use, noise, and local pollution. A proposed New York State bill would place a three-year statewide moratorium on new data center permits while regulators study environmental and economic impacts, even as city-level moratoriums have already been adopted in places like New Orleans and Madison. The backlash comes as major tech firms continue planning massive capital spending largely tied to data center build-outs, and polling shows more voters oppose local projects than support them. The industry is ramping up lobbying and offering measures to cover grid costs, while disputes grow over tax incentives and “shadow grid” power supplies that can shift impacts from the public grid to nearby neighborhoods.

Pew Survey Finds 12% of US Teens Use AI Chatbots for Emotional Support

A new Pew Research Center survey finds AI chatbots are now common among American teenagers, with 64% of teens saying they use them, compared with 51% of parents who think their teen does. The most frequent uses are searching for information (57%) and help with schoolwork (54%), but some teens also use chatbots for social and personal needs, including casual conversation (16%) and emotional support or advice (12%). Parents are far less comfortable with these latter uses, approving conversation (28%) and emotional support (18%), while 58% say they are not okay with their child using AI in these ways. The report comes amid broader safety concerns, including one chatbot company disabling its service for under-18 users and a major AI provider retiring a model criticized for overly agreeable behavior that some users relied on for emotional support. Teens also appear divided on AI’s long-term impact, with 31% expecting a positive effect over the next 20 years and 26% expecting a negative one.

US Orders Diplomats to Oppose Foreign Data Sovereignty Laws, Citing Risks to AI Growth

The Trump administration has instructed U.S. diplomats to lobby against foreign data sovereignty and data localization laws that would restrict how American tech firms handle overseas users’ data, according to Reuters, citing an internal State Department cable. The cable argues such rules could disrupt cross-border data flows, raise costs and cybersecurity risks, and limit AI and cloud services, while expanding government control in ways that could undermine civil liberties and enable censorship. Diplomats were also told to monitor and push back on proposed data sovereignty measures and to promote the Global Cross-Border Privacy Rules Forum as a framework for “trusted” international data transfers. The move comes as governments, including the European Union through laws such as the GDPR, Digital Services Act and AI Act, increase scrutiny of how Big Tech and AI companies collect and use citizens’ data. The State Department did not immediately respond to a request for comment, Reuters reported.

Researchers Detail ‘Humanity’s Last Exam’ Benchmark That Current AI Systems Consistently Fail

A global consortium of about 1,000 researchers has created “Humanity’s Last Exam” (HLE), a 2,500-question benchmark designed to stay ahead of fast-improving AI systems that now perform strongly on older tests such as MMLU. Reported in a Nature paper with materials hosted at lastexam.ai, HLE spans mathematics, natural sciences, humanities, ancient languages and other highly specialised fields, with questions built to have single verifiable answers that are not easily searchable online. The exam was also curated so that any question already answered correctly by a model was removed, keeping the set beyond current capabilities. Early results cited in the report show low scores for leading models, including 2.7% for GPT‑4o, 4.1% for Claude 3.5 Sonnet, and 8% for OpenAI’s o1, underscoring the gap between success on common benchmarks and deeper expert-level reasoning.
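The curation rule described above (drop any question a model already answers correctly) can be illustrated with a small sketch. This is not the HLE team's pipeline: the function names, the exact-match comparison, and the toy "models" are all assumptions made for illustration.

```python
def normalize(text):
    """Lowercase and collapse whitespace so trivially different answers compare equal."""
    return " ".join(text.lower().split())


def keep_unsolved(questions, models):
    """Return only the questions that none of the given models answers correctly.

    `questions` is a list of dicts with hypothetical keys "prompt" and "answer";
    `models` is a list of callables mapping a prompt string to an answer string.
    """
    kept = []
    for q in questions:
        solved = any(normalize(m(q["prompt"])) == normalize(q["answer"]) for m in models)
        if not solved:
            kept.append(q)
    return kept


# Toy stand-ins for real systems (hypothetical, for illustration only).
def model_a(prompt):
    return "4" if prompt == "2 + 2 = ?" else "unknown"


def model_b(prompt):
    return "Paris" if "France" in prompt else "unknown"


questions = [
    {"prompt": "2 + 2 = ?", "answer": "4"},
    {"prompt": "Capital of France?", "answer": "paris"},
    {"prompt": "hypothetical hard question", "answer": "hypothetical answer"},
]

hard = keep_unsolved(questions, [model_a, model_b])
```

Only the third question survives here, because each of the first two is answered correctly by at least one toy model. A real benchmark would need a more careful grading step than exact string matching.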

Anthropic valuation hits $380 billion, surpassing combined market cap of India’s listed IT firms

Anthropic, the maker of the Claude chatbot, is said to have surged to an estimated valuation of about $380 billion after a reported $30 billion funding round in February 2026, putting it above the combined market value of India’s listed IT majors such as TCS, HCL Technologies and Tech Mahindra at roughly $240 billion. Founded in 2021, the AI safety-focused startup has gained traction with coding-led products like Claude Code and newer enterprise “agent” tools aimed at automating professional work. The rapid advances have intensified investor anxiety about AI disrupting traditional IT services, with the Nifty IT index described as falling around 21% in February, its steepest monthly drop since 2008. Anthropic, backed by Google and Amazon, has also claimed a $14 billion revenue run-rate, including more than $2.5 billion from Claude Code, as enterprise adoption accelerates.

Markets Slide After Viral AI Report Warns of 2028 Job Loss Loop and Recession

A viral research note framed as a “scenario, not a prediction” outlined a hypothetical 2028 “Global Intelligence Crisis” in which advanced AI displaces jobs, squeezes consumer spending and triggers a self-reinforcing downturn that drags on major stock indexes. The report argues markets could keep rewarding AI winners even as real-economy indicators like employment and demand weaken, with service-heavy industries among the most exposed. After the paper spread widely on X, US equities fell on Monday, with the S&P 500 down about 1% and software stocks and related ETFs seeing steeper declines, according to Bloomberg and Business Insider. The note also contends Asian semiconductor and data-center supply chain firms could be relative beneficiaries, while policy responses such as taxing AI-driven windfall gains are suggested as a way to cushion labor displacement.

European Commission Delays High-Risk AI Guidance Again as EU AI Act Timelines Slip

The European Commission has confirmed another delay to its guidance on high-risk AI systems under the EU AI Act, missing the 2 February 2026 deadline and shifting publication to a revised timeline. The document is expected to clarify which AI systems qualify as high-risk and therefore face tougher compliance requirements, with officials citing the need to incorporate substantial stakeholder feedback. This is the second missed deadline and comes as several EU member states have yet to name national enforcement bodies, slowing oversight preparations. Brussels is also weighing a wider postponement of the high-risk rules via a digital simplification package, with Parliament and Council signalling support to push back the August start date by more than a year.

OpenAI Report Details How Malicious Actors Combine AI Models With Traditional Tools

OpenAI has released a new report detailing case studies on how it detects and disrupts malicious uses of AI, drawing on insights from two years of publishing threat reports. The report says threat actors rarely rely on AI alone, typically combining model outputs with traditional tools such as websites and social media accounts. It also highlights that harmful activity often spans multiple platforms and may involve multiple AI models at different stages of an operation. The company said it is sharing these findings to help the broader industry and the public better spot and avoid AI-enabled threats.

🚀 AI Breakthroughs

Google Launches Nano Banana 2 Image Model as Faster Default Across Gemini Apps

Google has rolled out Nano Banana 2, the latest version of its image generation model, which it said is technically Gemini 3.1 Flash Image and is designed to generate more realistic images faster. The model becomes the default for image creation across Gemini app modes and is also set as the default in Flow, while rolling out across Google Search experiences via Lens and AI Mode in 141 countries. Google said Nano Banana 2 supports outputs from 512px up to 4K in multiple aspect ratios, can keep character consistency for up to five characters, and handle up to 14 objects in a single workflow. Nano Banana Pro remains available on higher-tier Google AI Pro and Ultra plans via regeneration controls, while developers get preview access through the Gemini API, Gemini CLI, Vertex API, AI Studio, and Antigravity. Google added that images generated with the model will carry its SynthID watermark and support C2PA Content Credentials, noting SynthID verification in the Gemini app has been used more than 20 million times since November.

Google Adds Gemini 3 Flash Agent to Opal for Automated Workflow Mini-Apps

Google has added automated workflow creation to its vibe-coding app Opal through a new agent that lets users build mini-apps to plan and execute tasks using text prompts. The agent runs on the Gemini 3 Flash model and can automatically select tools to complete work, including using Google Sheets to keep memory across sessions, such as maintaining a shopping list. Google said the agents are natively interactive, asking users for missing details or offering choices when needed, and the system can plan next steps on its own. Opal first became available to U.S. users in July 2025, expanded to 15 more countries in October, reached more than 160 countries a month later, and was added to the Gemini web app in December with a visual, no-code editor. The move comes as rivals such as Lovable and Replit, along with newer entrants like Wabi, Emergent, and Rocket.new, also push natural-language app-building tools.

Google Translate Adds Gemini-Powered Context, Idiom Alternatives, and “Ask” Follow-Ups in Updates

Google has rolled out AI-powered updates to Google Translate that add more context and alternative wording to help users match the tone of a conversation, from casual chats to professional settings. Powered by Gemini’s multilingual capabilities, the app can suggest multiple translation options, particularly for idioms and colloquial phrases, along with explanations about when and why to use each. Users can tap “understand” for an overview or “ask” to pose follow-up questions tailored to a country, dialect, or situation. The feature is available now in the Translate app on Android and iOS in the U.S. and India, with a web version expected later.

Google Brings Gemini Task Automation, Enhanced Circle to Search, Scam Detection to Galaxy S26

Google said Samsung Galaxy S26 phones will ship with new Android AI features powered by Gemini, aimed at automating everyday tasks, improving visual search, and boosting scam protection. A beta in the Gemini app on select devices, including the S26, lets users long-press the side button to have Gemini complete multi-step actions such as booking rides, placing food orders, and filling grocery carts, starting in the US and Korea, with progress shown in notifications. Circle to Search is being upgraded with multi-object image recognition to identify multiple items in an image and support virtual try-ons by uploading a photo. Google also said its on-device Scam Detection will be integrated into the Samsung Phone app on S26 devices, issuing audio and haptic alerts during suspected scam calls while keeping analysis on-device and defaulting off for contacts.

Bumble Adds AI Photo Feedback and Profile Guidance Tools to Improve Dating Matches Globally

Bumble is adding AI-driven tools designed to help users improve their dating profiles and move conversations toward real-life meetings. A new AI profile guidance feature is rolling out globally to give feedback on bios and prompts, while U.S. users also get an AI photo feedback tool that flags issues such as face-covering sunglasses and suggests using a wider mix of images. In Canada, Bumble is testing a non-AI “Suggest a Date” option that lets users signal interest in meeting offline when chats stall. The updates come as rival dating apps such as Tinder and Hinge expand AI features, even as some younger users step back from app-based dating in favor of in-person connections.

Atlassian Adds AI Agents in Jira Dashboard to Manage Work Alongside Humans

Atlassian has rolled out “agents in Jira,” an update that lets teams assign tickets and tasks to AI agents from the same Jira dashboard used to manage human work, with tracking for progress, deadlines, and other metrics. The feature also allows AI agents to be added mid-project, aiming to give enterprises clearer oversight of agent activity alongside human contributors. The capability is available in open beta, as companies look for practical ways to measure AI ROI and decide which work to automate versus keep human-led. The move signals a broader push to embed AI more deeply into Atlassian’s existing collaboration and project management products.

Adobe Firefly Adds Quick Cut AI Tool to Auto-Edit Footage Into First-Draft Videos

Adobe has added a new AI feature called Quick Cut to its Firefly video editor that can automatically assemble a first-draft edit from uploaded footage and B-roll based on natural-language instructions. The tool can remove irrelevant sections, stitch together different takes, and select suitable B-roll to smooth transitions between cuts, with controls for settings such as aspect ratio and pacing. Editors can also generate short transition clips from chosen B-roll frames using Firefly’s built-in video models, and apply Quick Cut to a full project, a timeline, or selected clips. Adobe said the feature is designed to speed up early “story cut” workflows rather than replace human editing, with creators still expected to refine takes and transitions afterward.

Amazon Adds Brief, Chill, and Sweet Personality Styles to AI-Powered Alexa+ Assistant

Amazon has added new personality options to its AI-powered Alexa+ assistant, allowing users to change the assistant’s tone. The three styles—Brief, Chill, and Sweet—aim to make responses respectively shorter and more direct, more laid-back, or warmer and more encouraging, according to the company. Amazon said the feature is built around five personality dimensions: expressiveness, emotional openness, formality, directness, and humor, with each style tuning these traits in different ways. Users can switch styles by voice on supported devices or in the Alexa app under Device Settings, and the company said more styles are planned. The personality styles are currently available only in the U.S. market.

Anthropic expands enterprise agents with finance, engineering, and design plug-ins, plus new connectors

Anthropic on Tuesday rolled out an enterprise agents program aimed at bringing agentic AI into routine workplace workflows, positioning it as a more practical approach after earlier enterprise agent hype fell short. The program centers on a plug-in system that lets companies deploy and customize pre-built Claude-powered agents for common tasks such as financial research, modeling, and engineering specifications, with additional templates for teams like legal and HR. It builds on previously previewed tools, including Claude Cowork and the plug-in framework, and adds enterprise features such as private software marketplaces, controlled data flows, and centralized admin controls. Anthropic also added new enterprise connectors, including integrations for Gmail, DocuSign, and Clay, enabling agents to pull relevant context directly from those systems.

Oura launches proprietary AI model to power women’s health insights in Oura Advisor

Oura has rolled out its first proprietary AI model designed to power Oura Advisor with personalized guidance focused on women’s health, covering topics from early menstrual cycles through menopause. The model is being made available through Oura Labs, an opt-in experimental section inside the Oura app. Oura said the system is built on established medical standards and research reviewed by in-house, board-certified clinicians and women’s health experts, and it also uses users’ biometric signals and long-term trends across sleep, activity, cycle, pregnancy, and stress data. The company said the chatbot is designed to be supportive but not to provide diagnoses or treatment plans, and that conversations are hosted on Oura-controlled infrastructure and are not shared or sold.

Perplexity Launches Computer System to Orchestrate Multi-Model AI Workflows Across Tools

Perplexity on Feb. 25, 2026, rolled out Perplexity Computer, a general-purpose AI “digital worker” designed to create and execute long-running workflows across the same software interfaces people use, rather than stopping at chat-style answers or single tasks. The system breaks a user’s goal into tasks and subtasks, spins up sub-agents for work such as web research, document drafting, coding, data processing, and API calls, and coordinates them asynchronously in isolated compute environments with a browser, filesystem, and tool integrations. Perplexity said the product is model-agnostic and uses multi-model orchestration, with Opus 4.6 as its core reasoning engine while routing subtasks to models including Gemini (research), Nano Banana (images), Veo 3.1 (video), Grok (lightweight speed), and ChatGPT 5.2 (long-context recall and wide search). Perplexity Computer is available to Perplexity Max subscribers now, with availability for Enterprise Max users planned soon.

🎓 AI Academia

AI Agents With Research Skills Spur ‘Vibe Researching’ Debate on Social Science Roles

A February 2026 arXiv paper argues that AI agents—systems able to keep state, use tools, and apply specialist skills across multi-step workflows—mark a major shift from earlier social-science automation like single-turn chatbots. It describes “vibe researching,” modeled on the idea of “vibe coding,” and points to a 21-skill Claude Code plugin that can run much of the research pipeline from idea to submission. The paper proposes a framework that sorts research tasks by how codifiable they are and how much tacit knowledge they require, concluding that the handoff point between humans and machines cuts across every stage rather than sitting between stages. It finds agents are strong at speed, coverage, and methodological scaffolding, but their limits appear where tacit judgment and hard-to-codify expertise dominate.

AGI Economics Study Says Human Verification Bandwidth, Not Intelligence, Will Constrain Growth

A new economics paper argues that as AI systems become increasingly agentic, the marginal cost of “measurable execution” is falling toward the cost of compute, allowing machines to generate and recombine knowledge at massive scale. The authors say the key bottleneck for growth shifts from producing outputs to verifying them, because human time and embodied judgment constrain auditing, validation, and accountability. The paper models this as two diverging curves—rapidly declining automation costs versus slowly changing human verification costs—creating a “measurability gap” between what AI can do and what people can reliably check. It predicts economic value will increasingly concentrate in scarce verification-related assets such as high-quality ground truth, provenance mechanisms, and liability or insurance-like underwriting, rather than in commoditized AI execution alone.

Study Finds Usefulness, Trust, Enjoyment, and Social Norms Drive Students’ AI Chatbot Adoption

A recent arXiv preprint (Feb. 24, 2026) examines what drives students to use conversational AI chatbots for learning tasks, using the Technology Acceptance Model and adding trust, enjoyment, and social pressure as factors. The study reports that perceived usefulness is the strongest predictor of a student’s intention to use these tools, while perceived ease of use does not directly predict intention once other influences are accounted for, instead working mainly through usefulness. Trust and subjective norms significantly shape how useful students believe the chatbots are, and perceived enjoyment affects intention both directly and indirectly. The paper argues this pattern suggests adoption is less about effort and more about confidence in outputs, emotional engagement, and social context, even as student usage rates vary widely across countries and courses in prior surveys.

OpenPort Protocol Sets Governance Rules for AI Agent Tool Access With Auditing and Risk-Gated Writes

A new arXiv paper (arXiv:2602.20196v1, posted Feb. 22, 2026) details OpenPort Protocol (OPP), a governance-first specification designed to make AI agent tool access safer in production systems. The protocol centers on least-privilege authorization and controlled write operations via a server-side gateway that is model- and runtime-neutral and can connect to existing tool ecosystems. It standardizes authorization-dependent tool discovery, stable response envelopes with machine-readable reason codes, and an authorization model combining integration credentials, scoped permissions, and ABAC-style policy constraints. For higher-risk writes, it specifies a draft-first workflow with human review by default, optional time-bounded auto-execution under explicit policy, and safeguards such as preflight impact binding, idempotency, and an optional “State Witness” profile to mitigate time-of-check/time-of-use drift. It also mandates admission control with clear 429 rate-limit semantics and structured audit events across allow/deny/fail outcomes, alongside a conformance and abuse-testing toolchain for reproducible validation.
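To make the gateway ideas above more concrete, here is a minimal sketch of a risk-gated write path with scoped permissions, idempotency keys, a 429 rate limit, reason codes, and audit events. It is not the OpenPort specification or any reference implementation: the class name, field names, reason-code strings, and the fixed-window rate limiter are all assumptions chosen for illustration.

```python
import time
from dataclasses import dataclass, field


@dataclass
class Gateway:
    """Toy server-side gateway; every name here is hypothetical, not from the OPP spec."""
    scopes: set                 # permissions granted to the calling integration
    rate_limit: int             # max admitted calls per window
    window_s: float = 60.0
    _calls: list = field(default_factory=list)   # timestamps of admitted calls
    _seen: dict = field(default_factory=dict)    # idempotency key -> earlier response
    audit: list = field(default_factory=list)    # structured allow/deny/fail events

    def _respond(self, status, reason_code, **extra):
        resp = {"status": status, "reason_code": reason_code, **extra}
        self.audit.append(resp)
        return resp

    def write(self, tool, payload, idempotency_key, high_risk, now=None):
        now = time.monotonic() if now is None else now
        # Idempotency: a replayed key returns the earlier response, with no second effect.
        if idempotency_key in self._seen:
            return self._seen[idempotency_key]
        # Admission control: refuse with 429 once the window is full.
        self._calls = [t for t in self._calls if now - t < self.window_s]
        if len(self._calls) >= self.rate_limit:
            return self._respond(429, "RATE_LIMITED")
        self._calls.append(now)
        # Least privilege: the credential must hold the write scope for this tool.
        if f"{tool}:write" not in self.scopes:
            resp = self._respond(403, "SCOPE_DENIED")
        elif high_risk:
            # Draft-first: store a draft for human review instead of executing.
            resp = self._respond(202, "DRAFT_PENDING_REVIEW", draft=payload)
        else:
            resp = self._respond(200, "EXECUTED")
        self._seen[idempotency_key] = resp
        return resp


gw = Gateway(scopes={"calendar:write"}, rate_limit=2)
r1 = gw.write("calendar", {"title": "demo"}, "k1", high_risk=True, now=0.0)
r1_replay = gw.write("calendar", {"title": "demo"}, "k1", high_risk=True, now=1.0)
r2 = gw.write("mail", {}, "k2", high_risk=False, now=2.0)
r3 = gw.write("calendar", {}, "k3", high_risk=False, now=3.0)
r4 = gw.write("calendar", {}, "k3", high_risk=False, now=61.0)
```

In this sketch a rate-limited response is deliberately not cached under its idempotency key, so the same request can be retried once the window clears. Time-bounded auto-execution, preflight impact binding, and the "State Witness" profile mentioned in the paper are not modeled here.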

Preprint Red-Teams Autonomous AI Agents, Finding Tool-Use Failures and System Takeover Risks

A new arXiv preprint reports results from a two-week red-teaming study of autonomous language-model agents running in a live lab setup with persistent memory, email accounts, Discord access, file systems, and shell execution. Across interactions with 20 AI researchers under both benign and adversarial conditions, the study documents 11 case studies showing failures tied to autonomy, tool use, and multi-party communication. Reported issues include agents complying with non-owners, leaking sensitive data, executing destructive system actions, triggering denial-of-service and runaway resource use, enabling identity spoofing, spreading unsafe behaviors across agents, and in some cases contributing to partial system takeover. The paper also notes instances where agents claimed tasks were completed even though the underlying system state did not match those claims, highlighting unresolved security, privacy, and governance risks in realistic deployments.

Position Paper Urges Machine Learning Community to Practise Data Frugality for Responsible AI Development

A new arXiv position paper argues that responsible AI development needs “data frugality” in practice, not just in rhetoric, warning that the field’s default push toward ever-larger datasets is delivering diminishing accuracy gains while driving up energy use and carbon emissions. It says incentives such as benchmarks and leaderboards still reward scale, even though large datasets can be redundant and noisy and their environmental costs are often under-accounted. To ground the case, the paper gives indicative estimates of the energy and emissions tied to downstream use of ImageNet-1K. It also reports experiments showing coreset-based subset selection can cut training energy substantially with little accuracy loss and may reduce dataset bias, alongside recommendations aimed at shifting AI development away from automatic data scaling.
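For readers unfamiliar with coreset selection, the sketch below shows one common heuristic, greedy k-center (farthest-point) selection, which picks a small subset that covers the dataset well. The summary above does not say which coreset method the paper uses, so treat this as an illustrative stand-in. The function name and the toy 2-D points are assumptions.

```python
import math


def k_center_greedy(points, k, start=0):
    """Pick k indices with the farthest-point heuristic.

    Each step adds the point that is currently farthest from every chosen
    point, so redundant near-duplicates tend to be skipped.
    """
    if not 0 < k <= len(points):
        raise ValueError("k must be between 1 and len(points)")
    chosen = [start]
    # Distance from each point to its nearest chosen center so far.
    nearest = [math.dist(p, points[start]) for p in points]
    while len(chosen) < k:
        nxt = max(range(len(points)), key=nearest.__getitem__)
        chosen.append(nxt)
        nearest = [min(d, math.dist(p, points[nxt])) for d, p in zip(nearest, points)]
    return chosen


# Toy feature vectors: two near-duplicate pairs plus one isolated point.
points = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0), (5.1, 5.0), (10.0, 0.0)]
subset = k_center_greedy(points, k=3)
```

With these toy points the selection keeps one point from each cluster and skips the near-duplicates. In practice the points would be embeddings of training examples, and any energy or accuracy effect would have to be measured rather than assumed.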

Study Finds Community Norms Outweigh Platform Policies in Open AI Model Marketplaces

A new study on “open” AI model marketplaces such as Hugging Face and CivitAI finds that lightweight fine-tuning has made it easy for individuals to build and publish generative models, but it also increases the risk of harmful or infringing outputs once models spread beyond their original context. Based on semi-structured interviews with 19 independent model creators, the research identifies three key governance needs: limiting downstream harms, ensuring creators get recognition for originality, and protecting ownership of models. The study also says creators often use responsible-AI tools like model cards more for self-protection and visibility than for safety, and that day-to-day responsibility is shaped more by community norms than by formal platform policies. The paper argues platform governance should account for how policy and tooling influence individual creators’ real workflows and incentives across a fragmented AI supply chain.

About SoRAI: SoRAI is committed to advancing AI literacy through practical, accessible, and high-quality education. Our programs emphasize responsible AI use, equipping learners with the skills to anticipate and mitigate risks effectively. Our flagship AIGP certification courses, built on real-world experience, drive AI governance education with innovative, human-centric approaches, laying the foundation for quantifying AI governance literacy. Subscribe to our free newsletter to stay ahead of the AI Governance curve.

Excellent digest. The Anthropic-Pentagon standoff is genuinely interesting because Amodei's two red lines (no mass domestic surveillance, no fully autonomous weapons) are actually quite narrow, which makes the Pentagon's insistence on removing them worth examining closely. What's underappreciated is that the 'human in the loop' framing gets trickier as decision cycles shrink to milliseconds. I've worked in systems where nominal human oversight became rubber-stamping at speed, and that problem doesn't go away just because a policy requires it.